AI in Marketing: Personalization Without Creepiness

Summary

AI-powered personalization allows marketers to deliver relevant content, recommendations, and experiences to individuals. However, excessive targeting, unclear data practices, and overly humanlike AI can raise privacy concerns and weaken trust. Research shows transparency and user control improve engagement and conversion. With privacy-by-design and opt-in data practices, brands can turn personalization into a trusted value exchange.

Key insights:

The Personalization–Privacy Paradox: Consumers want relevant experiences but become uncomfortable when personalization exposes hidden data collection.

The Uncanny Valley of Marketing: Hyper-targeting, surveillance-like retargeting, and overly human AI agents can trigger consumer distrust.

Trust Drives Conversion: Transparent AI systems increase engagement, while opaque personalization reduces customer confidence.

Transparency Improves Data Sharing: Nearly half of consumers trust brands more when AI use is openly disclosed.

Ethical AI Is Becoming Mandatory: Regulations such as GDPR and the EU AI Act are forcing responsible data use and explainability.

Privacy-First Personalization Works: Opt-in consent, explainable AI, and privacy dashboards help deliver relevance without appearing intrusive.

Introduction

The integration of artificial intelligence into marketing has transformed commercial communication, enabling brands to deliver personalized messages, recommendations, and experiences with unprecedented precision and scale. Yet this power brings challenges: as personalization grows more sophisticated, many consumers perceive it as intrusive or manipulative, blurring the line between relevance and surveillance. The central tension lies in balancing the commercial imperative to personalize with the consumer’s need to feel respected.

This insight explores how AI-driven personalization can be harnessed to build trust, examining the psychological and regulatory conditions that trigger adverse responses and offering a framework for strategies that enhance customer relationships rather than erode them.

What Is AI Personalization in Marketing?

AI-driven personalization is the application of artificial intelligence technologies, including machine learning (ML), natural language processing (NLP), and predictive analytics, to tailor marketing content, product recommendations, pricing, and customer experiences to individual users in real time.

Unlike traditional segmentation (grouping consumers by broad demographics), AI personalization operates at the level of the individual, using behavioral signals, purchase history, location data, browsing patterns, and even emotional sentiment to construct a continuously updated model of who a person is and what they want next.

Some common forms of AI personalization in marketing include:

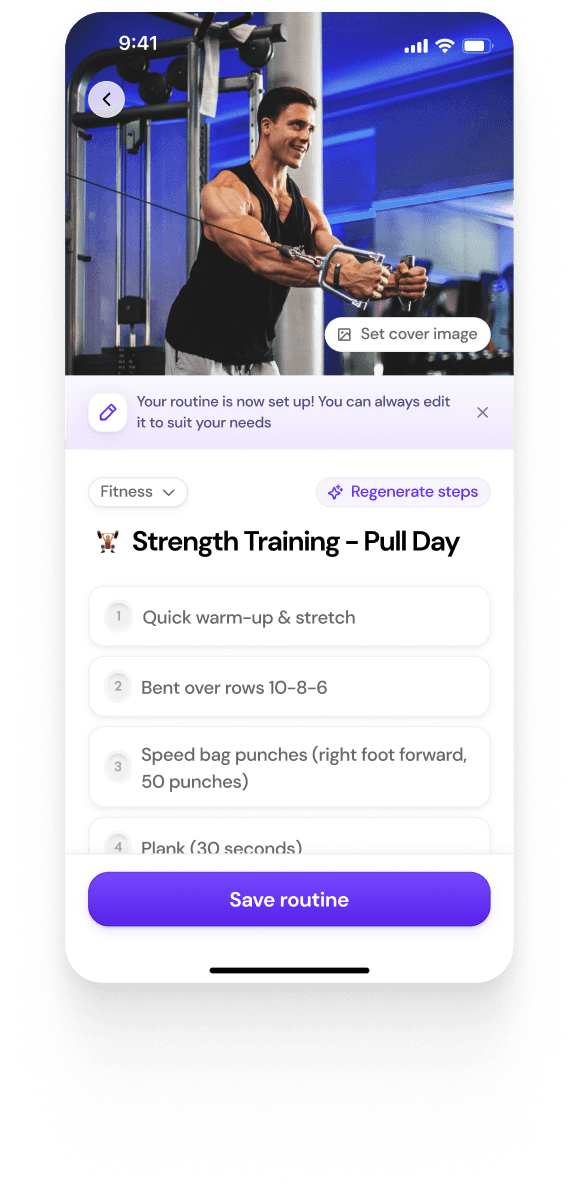

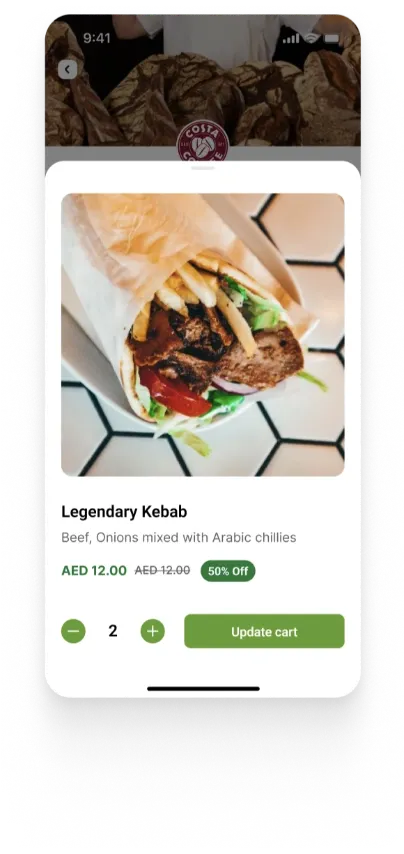

Recommendation engines: Platforms such as Netflix and Amazon deploy AI to surface content and products that a user is statistically likely to engage with, based on historical behavior and pattern-matching against similar user profiles.

Dynamic content: Email subject lines, advertising creatives, and website landing pages that adapt in real time to the specific viewer, presenting different messaging, imagery, or offers depending on the individual's inferred profile.

Predictive targeting: The deployment of advertising to consumers before they have actively expressed a need, based on behavioral pattern modeling that identifies likely future intent.

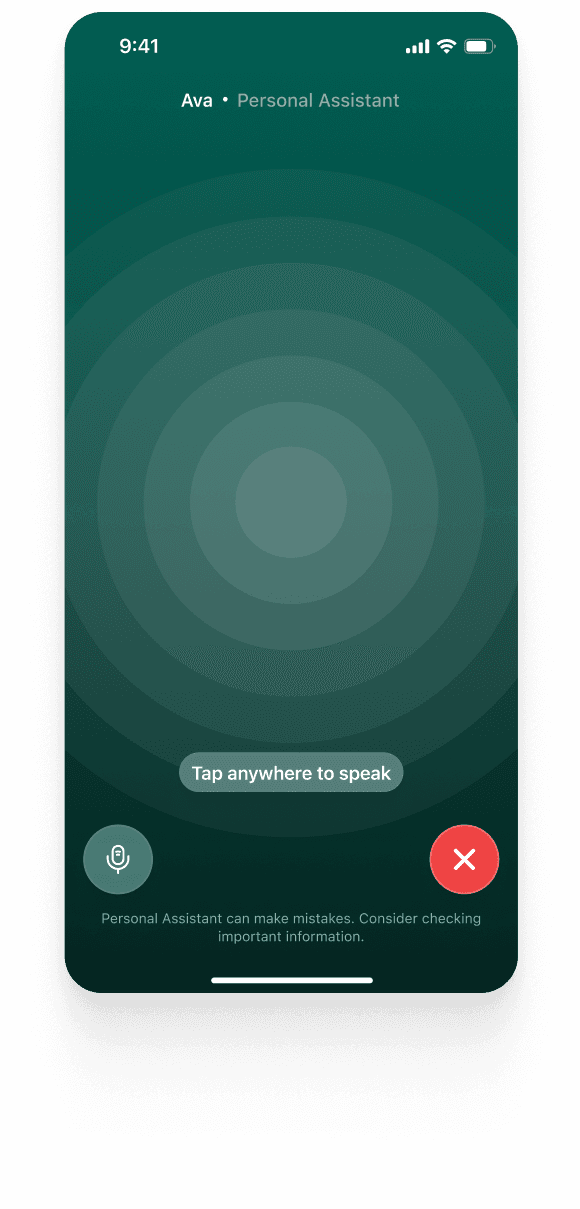

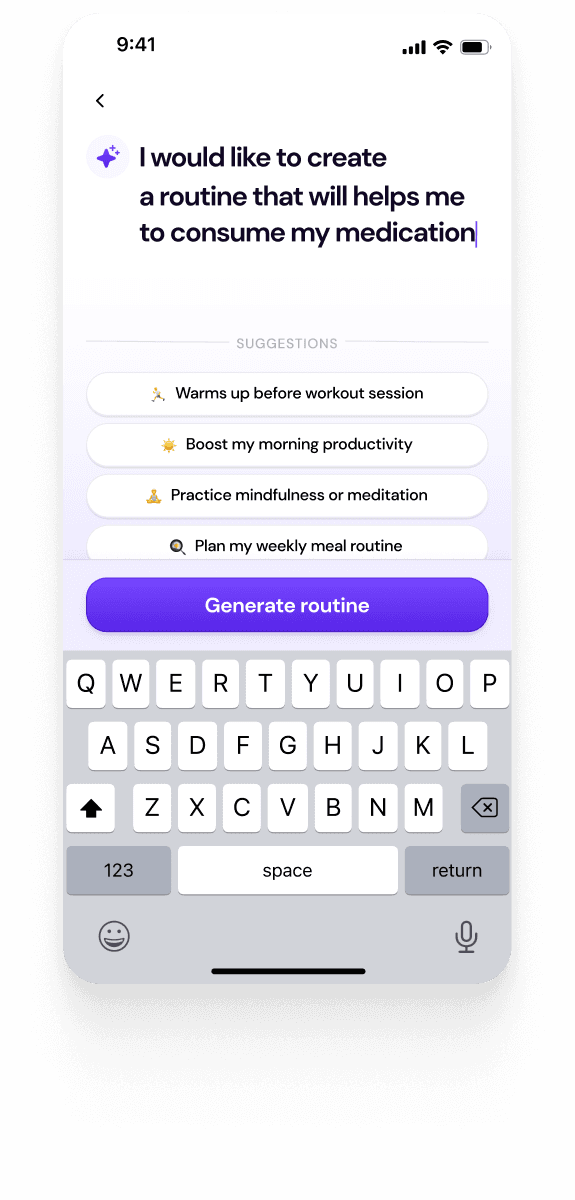

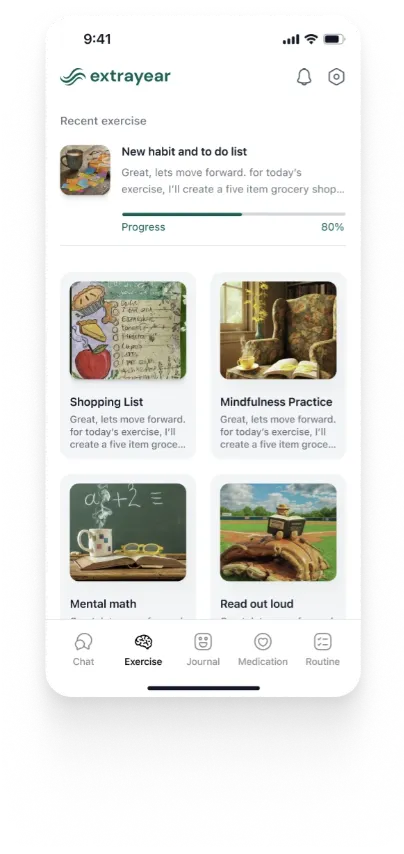

Conversational AI: Chatbots and virtual assistants that modulate their tone, language register, and commercial offers based on a user's interaction history and detected sentiment.

Dynamic pricing: Real-time price adjustment based on perceived demand, contextual urgency, or modeled willingness-to-pay at the individual level.

When executed well, this is undeniably powerful. According to McKinsey & Company, AI-powered personalization can deliver five to eight times the ROI on marketing spend and significantly boost customer engagement. The opportunity is enormous, but so is the risk.

The Personalization–Privacy Paradox

At the heart of the creepiness problem is a well-documented psychological tension researchers call the personalization–privacy paradox: consumers simultaneously value relevance and fear the misuse of their personal data.

This is not irrational. It reflects a genuine asymmetry of power. When a brand knows your purchasing habits, your location history, your health concerns, and your emotional state, and you have little visibility into how that data was obtained or how it will be used, the relationship becomes unbalanced. Personalization stops feeling like a service and starts feeling like surveillance.

Research published in the Journal of Consumer Psychology identifies consumer trust as the critical mediator between personalization benefits and actual behavioral outcomes. In other words, personalization only converts when trust already exists. Without it, even the most precisely targeted message can backfire.

A 2022 Pew Research Center survey found that 27% of Americans said they interact with AI at least several times a day, with another 28% saying they do so about once a day or several times a week. Many AI-powered services are also used without being recognized as AI. This ubiquity means the stakes of getting personalization wrong are higher than ever. Every misstep is experienced repeatedly, at scale.

When Personalization Becomes "Creepy": The Uncanny Valley of the Mind

The concept of the Uncanny Valley was first proposed by Japanese roboticist Masahiro Mori in 1970. His original theory described a curve in which human emotional response to humanoid robots becomes increasingly positive as they appear more human, until it reaches a tipping point, where the almost-but-not-quite-human triggers deep discomfort and revulsion.

In digital marketing, this phenomenon has evolved into what researchers now call the "Uncanny Valley of Mind", a dynamic in which highly anthropomorphized AI agents (chatbots or assistants designed to seem almost human) trigger heightened consumer privacy concerns, precisely because they seem capable of autonomous intent. In studies, consumers felt significantly more secure interacting with agents that presented as tools rather than entities.

More broadly, the Uncanny Valley translates into marketing in three distinct ways:

1. Hyper-targeting that reveals intimate knowledge: When a marketing communication references information that a consumer understands to be private (a spoken conversation, a search query about a sensitive personal matter, or a product tied to a recent life event such as a bereavement or medical concern), the brand signals that it has been watching rather than serving. The consumer's awareness of the surveillance mechanism shatters the illusion of relevance, replacing it with discomfort.

2. Over-anthropomorphized AI interactions: Brands that deploy AI chatbots designed to mimic human warmth and spontaneity risk triggering discomfort when the illusion slips through an inconsistent tone, robotic phrasing, or a response that is technically correct but emotionally tone-deaf. The same effect appears in AI-generated creative: South Korea's GS25 convenience store chain released an AI-generated campaign in 2024 featuring distorted visuals and eerily symmetrical expressions. The public response was immediate: "creepy," "soulless," "plastic."

3. Surveillance-like targeting behavior: Retargeting ads that follow a user from platform to platform, referencing the same product repeatedly over days, can feel less like marketing and more like being followed. This surveillance, the persistent monitoring of consumer behavior for commercial gain, is among the most common triggers of consumer distrust.

The pattern across all three is consistent: creepiness emerges when AI personalization exposes a mechanism the consumer was never told about and never agreed to. When consumers glimpse undisclosed machinery behind the curtain, the magic collapses into unease.

The Trust Equation: What the Data Says

Trust is not a soft or peripheral variable in AI-driven marketing. It serves as a hard commercial lever with direct, measurable implications for engagement, conversion, and retention.

A consistent body of research establishes the following causal chain: transparency generates trust; trust enables engagement; engagement produces conversion. When AI systems operate with demonstrable fairness and clarity, consumers are significantly more likely to transact. When algorithmic processes are opaque, confidence erodes, frequently in ways that prove difficult or impossible to reverse.

A study published in Computers in Human Behavior confirmed that AI-enabled personalization positively influences consumer trust and perceived usefulness in social media marketing environments. The same research, however, identified privacy concerns as a source of friction that significantly reduced engagement when not proactively addressed by the deploying organization.

The geographic and cultural dimensions of this dynamic are also instructive. Research cited by KPMG indicates that 72% of Chinese consumers express trust in AI-driven services, compared with only 32% of American consumers. This disparity reflects not merely cultural differences in attitudes toward data and institutional authority, but markedly different consumer expectations regarding transparency, disclosure, and the availability of meaningful control over personal data.

There is additionally a significant role for consumer AI literacy, the degree to which an individual understands, at a functional level, how recommendation algorithms and data-driven systems operate. Research documented in MDPI's Management Sciences journal confirms that consumers with higher AI literacy are substantially less likely to experience personalization as manipulative and more likely to find it genuinely useful. This has a direct strategic implication: investing in consumer education about one's AI practices is not a reputational liability. It is a trust-building asset with measurable commercial returns.

According to the Twilio 2024 State of Personalization Report, 49% of consumers say they would trust brands more if they openly disclosed their use of AI, and companies implementing ethical AI practices report 25–30% higher accuracy in personalized recommendations, with transparency-driven data sharing improving long-term customer lifetime value by 20–30%. On these figures, disclosure does not diminish the commercial effectiveness of personalization; it amplifies it by removing the ambient unease that otherwise discourages consumer engagement.

The Regulatory Landscape: No Longer Optional

The ethical case for responsible personalization now carries significant legal force, and the regulatory environment governing AI in marketing is evolving rapidly across major jurisdictions.

The EU General Data Protection Regulation (GDPR) requires a lawful basis for processing personal data (such as valid, informed consent), along with data minimization and the right to erasure, fundamentally changing how European marketers can collect and deploy consumer data. The California Consumer Privacy Act (CCPA) and a growing patchwork of U.S. state-level legislation are extending similar protections across American markets.

The EU AI Act, which entered into force in August 2024 with obligations phasing in over the following years, adds another layer: transparency obligations for AI systems that interact directly with people, which reach consumer-facing marketing tools such as chatbots, and human oversight requirements for systems classed as high-risk. Meanwhile, the U.S. Federal Trade Commission has begun actively enforcing against companies using AI to mislead consumers, and over 80% of U.S. policymakers now favor stricter data privacy rules.

For brands operating across borders, this creates complexity. But it also creates a strategic opportunity: the companies that build ethical AI marketing infrastructures proactively, not reactively, will be better positioned than those scrambling to comply after the fact.

The Association of National Advertisers' Ethics Code of Marketing Best Practices now explicitly advocates that marketers implement notice and transparency measures so consumers know what data is being collected, when, and for what purpose. This is no longer aspirational guidance. It is the direction of travel for the entire industry.

A Framework for Personalization Without Creepiness

The goal is not to abandon personalization; it is to engineer it in a way that consumers experience as value rather than violation. Here is a practical framework for doing so:

1. Adopt Privacy by Design

Embed privacy considerations into AI systems from the ground up, not as a retrofit. This means collecting only the data that is necessary (data minimization), setting clear internal policies on how long data is retained, and building systems in which misusing data is technically impossible, not merely prohibited by policy.
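As a rough illustration of data minimization and retention limits, the sketch below strips every field that is not on an allowlist and drops events older than a retention window before anything reaches a personalization model. The field names, the 90-day window, and the function names are assumptions made up for this example, not a recommended policy.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy values, chosen only for illustration.
ALLOWED_FIELDS = {"user_id", "event_type", "product_category", "timestamp"}
RETENTION = timedelta(days=90)


def minimize(event: dict) -> dict:
    """Keep only the fields the personalization model actually needs."""
    return {k: v for k, v in event.items() if k in ALLOWED_FIELDS}


def within_retention(event: dict, now: datetime) -> bool:
    """True if the event is still inside the retention window."""
    return now - event["timestamp"] <= RETENTION


def prepare_events(raw_events: list[dict], now: datetime) -> list[dict]:
    """Drop expired events, then strip every field not on the allowlist."""
    return [minimize(e) for e in raw_events if within_retention(e, now)]
```

Because the allowlist is applied at ingestion, a field such as precise GPS coordinates never enters the downstream system at all, which is closer to "technically impossible" than a policy document alone.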

2. Implement Explainable AI (XAI)

Explainable AI (XAI) refers to AI systems designed to communicate how they arrive at decisions in ways that non-technical users can understand. When a consumer can see in plain language that they received a product recommendation because they recently purchased a related item, the recommendation feels like a service. When there is no explanation, it feels like surveillance. Implementing XAI in consumer-facing personalization is one of the highest-leverage investments brands can make in trust.
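One lightweight way to surface the "because you recently purchased a related item" style of explanation is to attach a reason code to each recommendation and render it as a plain-language sentence. The sketch below assumes the recommender already records which signal triggered a suggestion; the class, the reason codes, and the templates are hypothetical, and this is reason surfacing only, not a model-level explainability method.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    product: str
    reason_code: str  # which signal triggered the recommendation
    evidence: str     # the specific item or context behind it


# Hypothetical reason codes mapped to plain-language templates.
TEMPLATES = {
    "related_purchase": "Recommended because you recently bought {evidence}.",
    "popular_in_category": "Recommended because it is popular in {evidence}.",
}


def explain(rec: Recommendation) -> str:
    """Return a plain-language reason, or a generic fallback if none is known."""
    template = TEMPLATES.get(rec.reason_code)
    if template is None:
        return "Recommended based on your activity on this site."
    return template.format(evidence=rec.evidence)
```

For example, `explain(Recommendation("tent footprint", "related_purchase", "a two-person tent"))` yields a sentence the consumer can read next to the recommendation itself.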

3. Transition from Opt-Out to Opt-In Consent

Most personalization systems are architected around opt-out: data is collected by default, and consumers must take active steps to stop it. Shifting to opt-in consent, where consumers actively choose to share data in exchange for a clearly articulated benefit, generates smaller data pools but significantly higher-quality consumer relationships. Users who opt in are more engaged, more loyal, and less likely to experience personalization as intrusive.
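The difference between opt-out and opt-in can be made concrete in code: under opt-in, the default state is "no consent", and personalized output is only used after the user has actively granted a named purpose. The following is a minimal sketch under that assumption; the class, the purpose string, and the function names are illustrative, not taken from any particular consent-management product.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    # Purposes the user has actively opted into; empty by default (opt-in model).
    granted: set[str] = field(default_factory=set)

    def grant(self, purpose: str) -> None:
        self.granted.add(purpose)

    def revoke(self, purpose: str) -> None:
        self.granted.discard(purpose)

    def allows(self, purpose: str) -> bool:
        return purpose in self.granted


def choose_recommendations(consent: ConsentRecord, personalized: list, generic: list) -> list:
    """Use personalized results only when the user opted in to that purpose."""
    if consent.allows("personalized_recommendations"):
        return personalized
    return generic
```

A new user with an empty `ConsentRecord` sees the generic list; revoking consent later returns them to it immediately.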

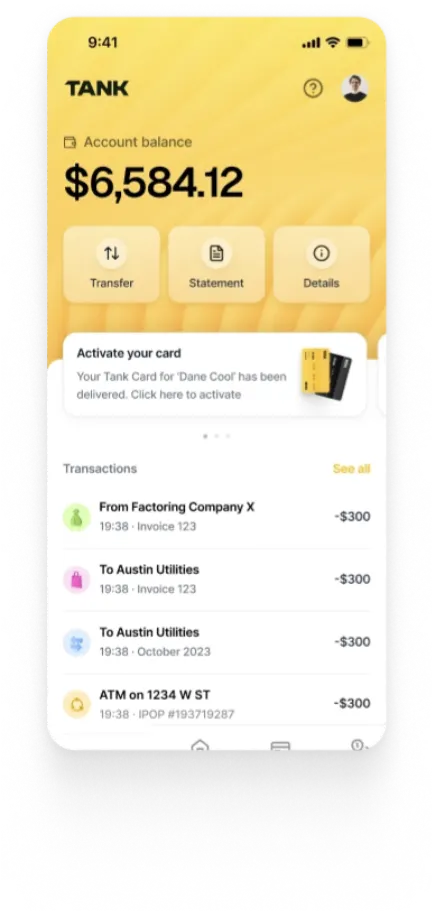

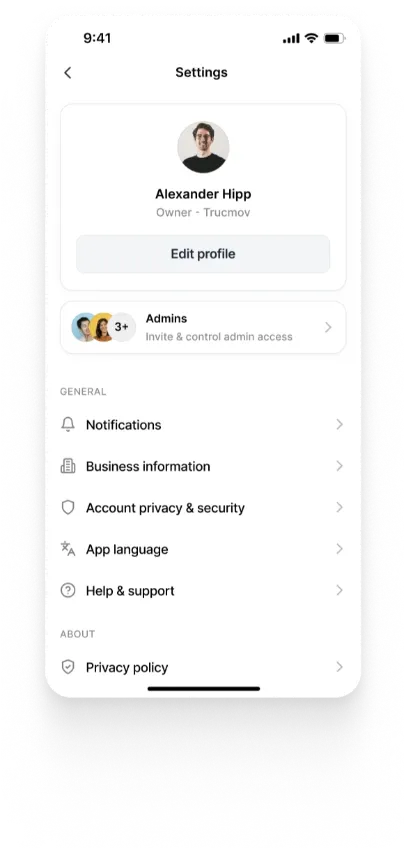

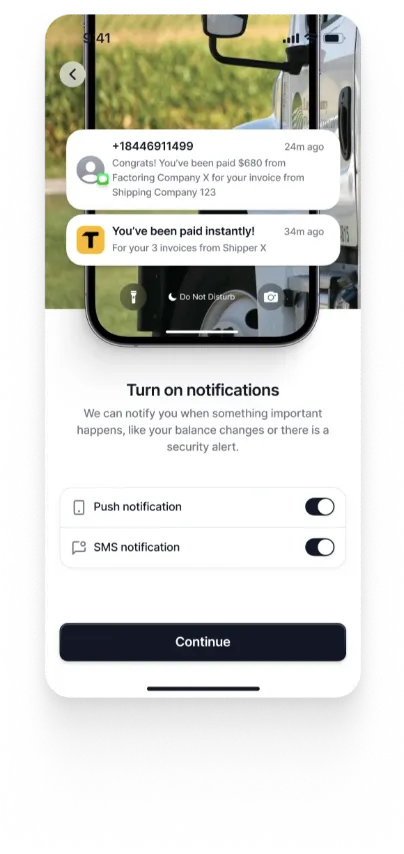

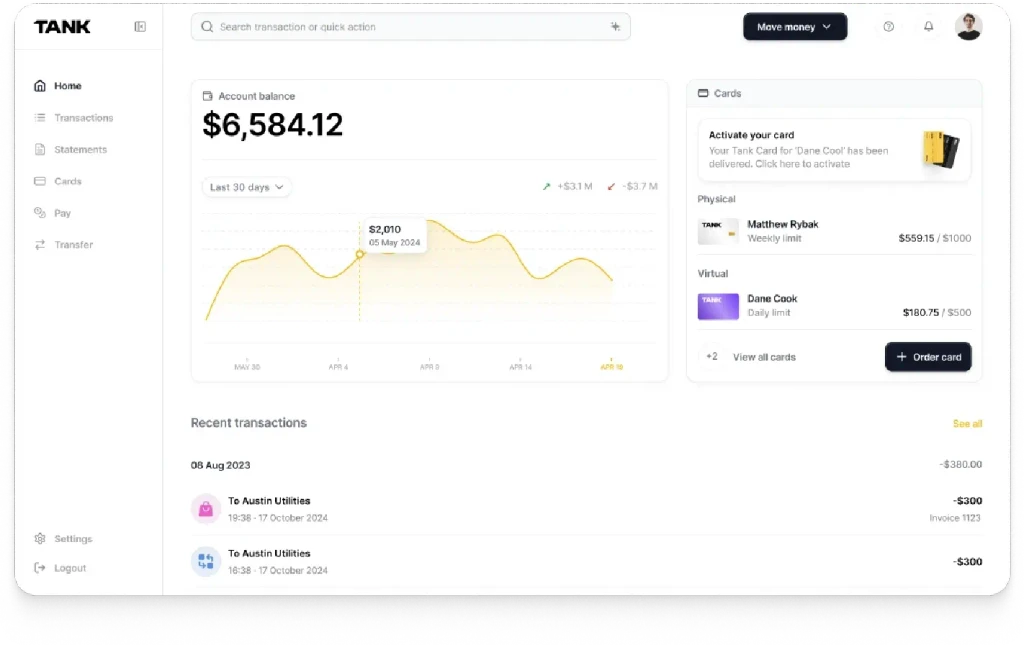

4. Build Transparency Dashboards

Give consumers a privacy dashboard, a single interface where they can see what data has been collected about them, how it is being used, which third parties have access, and how to modify or delete it. Companies like Apple have set an industry benchmark with App Tracking Transparency, demonstrating that consumer-facing privacy controls are competitive differentiators and not compliance burdens.

5. Calibrate AI Humanization Deliberately

If you deploy AI in consumer-facing interactions (chatbots, voice assistants, email automation), resist the temptation to over-humanize. Research shows that consumers feel more comfortable with AI that presents as a capable tool rather than a pseudo-person. Transparency about AI involvement, combined with algorithmic disclosure, significantly reduces privacy anxiety without reducing effectiveness.

6. Distinguish Contextual from Intimate Personalization

There is a meaningful difference between contextual personalization (recommending winter coats in November based on a user's location) and intimate personalization (referencing a user's recent search for anxiety medication in a wellness ad). The former feels relevant. The latter feels surveilled. Establish clear internal guidelines about what categories of data are appropriate to use for targeting, and default to the less invasive option whenever the benefit is marginal.
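Internal guidelines of this kind can be enforced with a default-deny filter: only signal categories that have been explicitly approved as contextual are passed to targeting, and everything else, including anything uncategorized, is dropped. The category names below are assumptions for illustration; a real list would come out of legal and ethics review.

```python
# Hypothetical allowlist of low-sensitivity, contextual signal categories.
# Anything not listed (health, finances, bereavement, ...) is excluded by default.
CONTEXTUAL_CATEGORIES = {"season", "coarse_location", "current_page_topic"}


def usable_signals(signals: list[dict]) -> list[dict]:
    """Keep only signals whose category is explicitly approved for targeting."""
    return [s for s in signals if s.get("category") in CONTEXTUAL_CATEGORIES]
```

Using an allowlist instead of a blocklist means a newly introduced data source is excluded until someone decides it is appropriate, which matches the "default to the less invasive option" rule.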

7. Maintain Meaningful Human Oversight

AI systems in marketing should augment human decision-making, not replace it. Implement regular audits of your personalization models to identify algorithmic bias, where models may be inadvertently excluding or exploiting certain demographic groups. Assign clear internal accountability for AI ethics, and ensure that automated decisions with significant consumer impact can be reviewed, contested, and corrected by humans.
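A very simple starting point for such an audit is to compare how often each group is shown an offer and flag groups that fall well below the best-served group for human review. The sketch below assumes the audit data arrives as (group, was_shown_offer) pairs; the function names and the 0.8 ratio threshold are illustrative assumptions, and a flag is a prompt for investigation, not proof of bias.

```python
from collections import defaultdict


def offer_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of users in each group who were shown the offer."""
    shown = defaultdict(int)
    total = defaultdict(int)
    for group, got_offer in decisions:
        total[group] += 1
        shown[group] += int(got_offer)
    return {g: shown[g] / total[g] for g in total}


def flag_disparities(rates: dict[str, float], min_ratio: float = 0.8) -> list[str]:
    """Groups whose rate falls below min_ratio times the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        return []
    return sorted(g for g, r in rates.items() if r / top < min_ratio)
```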

What Great AI Personalization Looks Like

Spotify's Discover Weekly and Wrapped features are the gold standard. The platform uses AI to build deeply personalized experiences, but it does so through an explicit value exchange. Users understand that Spotify processes their listening behavior to surface music they'll love. The data collection feels proportionate to the benefit. The insight ("you listened to this artist 200 times") feels like a gift, not an exposure.

The contrast with failure cases is instructive. Coca-Cola's 2024 AI-generated holiday ad, which used generative AI to recreate its iconic "Holidays Are Coming" campaign, received immediate backlash for feeling hollow and inauthentic despite (or perhaps because of) its technical polish. Audiences rejected not the AI, but the AI used without taste, without the emotional logic and cultural clarity that make brand communication resonate.

The lesson is consistent across cases: audiences don't reject AI personalization; they reject AI personalization that prioritizes the brand's convenience over their own dignity.

The Road Ahead

The future of AI personalization will be shaped by three emerging developments:

1. Federated Learning: A technique that processes consumer data locally on individual devices rather than centralizing it on company servers, federated learning promises to enable effective personalization while radically reducing privacy exposure. As it matures, it could dissolve the personalization–privacy paradox at the infrastructure level.

2. Contextual AI: An emerging alternative to behavioral tracking. Rather than building persistent profiles based on who a consumer is, it serves relevant content based on what a consumer is doing right now, such as the article they are reading or the video they are watching. No personal data profile is required.

3. Consumer AI Literacy: As users become more sophisticated about how recommendation systems work, brands that have built transparent, trust-based personalization practices will retain their customers. Those that have not will face an increasingly skeptical audience armed with better tools to detect and reject surveillance-based marketing.
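To make the federated learning idea in item 1 more concrete, here is a toy sketch of federated averaging (FedAvg) in plain Python. Each simulated client takes a gradient step on its own data, and the server only ever sees the resulting weights, averaged in proportion to each client's sample count. The linear model, learning rate, and round count are assumptions chosen to keep the example small; production systems add secure aggregation, client sampling, and much more.

```python
def local_update(weights, data, lr=0.1):
    """One gradient-descent step on a client's private data (linear model, squared error)."""
    n = len(data)
    grad = [0.0] * len(weights)
    for features, target in data:
        pred = sum(w * x for w, x in zip(weights, features))
        err = pred - target
        for i, x in enumerate(features):
            grad[i] += 2 * err * x / n
    return [w - lr * g for w, g in zip(weights, grad)]


def federated_average(client_weights, client_sizes):
    """Server step: average client weights, weighted by local sample count."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


def train(clients, dim, rounds=50):
    """Run FedAvg rounds; only weights, never raw data, reach the server."""
    weights = [0.0] * dim
    for _ in range(rounds):
        updates = [local_update(weights, data) for data in clients]
        weights = federated_average(updates, [len(d) for d in clients])
    return weights
```

Calling `train(clients, dim=1)` on two clients whose private data both follow the same underlying relationship recovers a shared model without either client's records leaving its own list.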

The trajectory is clear: the competitive advantage in AI marketing is shifting from who can collect the most data to who can earn the most trust.

Conclusion

AI-driven personalization isn’t inherently invasive; it’s a tool whose impact depends entirely on how responsibly it’s used. The brands that will thrive in the next era of marketing are those that treat personalization not as a covert extraction of consumer data, but as a transparent, reciprocal exchange where customers willingly share information because the value they receive is clear, meaningful, and respectful of their autonomy. With the technology already mature, regulations taking shape, and consumers more discerning than ever, the real question is whether your brand is building personalization that earns trust or crosses into discomfort. The path forward is simple: choose transparency, choose proportionality, and deliver personalization that makes people feel genuinely seen rather than surveilled.

References

Alexander, N. (2025, August 26). Consumer trust and perception of AI in marketing. Search Engine Journal. https://www.searchenginejournal.com/consumer-trust-and-perception-of-ai-in-marketing/553598/

Balancing Personalization and Privacy in AI-Enabled Marketing Consumer Trust, Regulatory Impact, and Strategic Implications – A Qualitative Study using NVivo. (2025, October 14). Advances in Consumer Research. https://acr-journal.com/article/balancing-personalization-and-privacy-in-ai-enabled-marketing-consumer-trust-regulatory-impact-and-strategic-implications-a-qualitative-study-using-nvivo-1633/

Rose, R. (2026, January 15). AI ads and the uncanny valley problem. Campaign India. https://www.campaignindia.in/article/ai-ads-and-the-uncanny-valley-problem/4rjnmc432wsbs1r1pxyk7eywxj

Hardcastle, K., Vorster, L., & Brown, D. M. (2025). Understanding customer responses to AI-Driven personalized journeys: impacts on the customer experience. Journal of Advertising, 54(2), 176–195. https://doi.org/10.1080/00913367.2025.2460985

Kibby, C. (2025, May 21). The ethical use of AI in advertising | IAPP. IAPP.org. https://iapp.org/news/a/the-ethical-use-of-ai-in-advertising

Team, S., & Team, S. (2026, January 14). Ethical AI in Marketing: Guide to Responsible, Transparent, and Trustworthy AI Use 2026. Seize Marketing Agency. https://seizemarketingagency.com/ethical-ai-in-marketing/