How Companies Are Using AI for Internal Knowledge Management

Summary

AI is reshaping enterprise knowledge management by transforming static documentation into intelligent systems that retrieve, synthesize, and update information in real time. Using technologies like semantic search, vector databases, RAG architectures, and agentic AI, companies can drastically reduce time spent searching for information while improving onboarding, R&D productivity, and organizational learning.

Key insights:

AI-Powered Search: Natural-language search combined with semantic retrieval helps employees find precise answers instead of manually searching documents.

RAG for Reliable Knowledge: Retrieval-Augmented Generation grounds AI responses in internal documents, improving accuracy and reducing hallucinations.

Autonomous Knowledge Agents: Agentic AI systems can ingest documents, summarize meetings, update records, and trigger workflows automatically.

Faster Onboarding and Training: AI assistants give new hires instant access to policies, processes, and expertise, reducing training time and support burden.

Enterprise-Scale Deployments: EY has deployed tens of thousands of AI agents to manage institutional knowledge, and Deloitte has rolled out an internal generative AI platform to 100,000 employees.

Data and Governance Challenges: Integration with legacy systems, data quality issues, and access control remain major barriers to successful adoption.

Introduction

Every organization, whether a small startup or a global enterprise, runs on knowledge. Yet when information is scattered across countless documents, policies, guides, and SaaS tools, it becomes more of a burden than an asset. Employees spend nearly two hours a day just searching for what they need, information overload drains productivity, and traditional knowledge management systems fall short. Artificial intelligence is changing this by not only storing knowledge but also organizing, interpreting, and updating it in real time. The result is an intelligent infrastructure that helps people find answers quickly and work smarter.

Definitions

Before exploring how companies are deploying AI for knowledge management, it helps to ground the key concepts driving this space.

1. Knowledge Management (KM)

Knowledge Management is the process by which organizations create, capture, distribute, and effectively use knowledge assets. KM encompasses everything from internal documentation and process wikis to the tacit expertise held by individual employees: the unwritten, experiential know-how that lives in people's heads and is extremely difficult to transfer.

2. Knowledge Management System (KMS)

A Knowledge Management System is an organized platform or toolset that helps businesses collect, store, and share information, whether documents, customer data, procedures, or product specifications. Traditional KMS platforms were passive repositories. Modern AI-powered ones are active intelligence systems that retrieve, synthesize, and update knowledge automatically.

3. Retrieval-Augmented Generation (RAG)

RAG is the dominant architectural approach for enterprise AI knowledge systems. It combines a Large Language Model (LLM) with a company's private knowledge base. When a user asks a question, the system first retrieves relevant internal documents and then has the LLM generate an answer grounded in those documents. This substantially reduces hallucination risk and makes responses traceable to specific internal sources. Traceability is especially critical in regulated industries where compliance and auditability are non-negotiable.
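The retrieve-then-generate flow can be sketched in a few lines of Python. This minimal sketch rests on several assumptions. A bag-of-words cosine score stands in for a real embedding model. The `Chunk` type, function names, and sample documents are hypothetical. The final LLM call is left out because the article does not name a model or API. The sketch shows the part that makes RAG auditable: only retrieved text enters the prompt, and each passage carries its source ID.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Chunk:
    source_id: str  # hypothetical document ID, kept so answers stay traceable
    text: str


def vectorize(text: str) -> Counter:
    # Bag-of-words term counts: a stand-in for a real embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: list[Chunk], k: int = 2) -> list[Chunk]:
    # Step 1: rank internal chunks by similarity to the question and keep the top k.
    q = vectorize(question)
    return sorted(chunks, key=lambda c: cosine(q, vectorize(c.text)), reverse=True)[:k]


def build_grounded_prompt(question: str, retrieved: list[Chunk]) -> str:
    # Step 2: give the LLM only the retrieved passages, each tagged with its source,
    # and instruct it to cite them and to admit when the answer is not there.
    context = "\n".join(f"[{c.source_id}] {c.text}" for c in retrieved)
    return (
        "Answer using only the context below and cite the [source] for each claim. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


kb = [
    Chunk("hr/pto-policy", "Employees accrue 20 days of paid time off per year."),
    Chunk("it/vpn-setup", "Install the VPN client before connecting to internal systems."),
    Chunk("finance/travel", "Travel expenses must be submitted within 30 days."),
]
question = "How many days of paid time off do employees get?"
print(build_grounded_prompt(question, retrieve(question, kb, k=1)))
```

Asking the model to cite the bracketed source IDs lets a reviewer trace any answer back to a specific internal document.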

4. Semantic Search and Vector Databases

Traditional keyword-based search returns results based on exact string matches. Semantic search understands intent, not just keywords. It converts content into vector embeddings (numerical representations of meaning) and stores them in a vector database or database extension such as Pinecone, Weaviate, or pgvector. It then retrieves information based on conceptual similarity. An employee searching "how do I expense international travel" finds the relevant policy even if the document uses the phrase "global reimbursement procedures."
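The matching step behind that example is usually cosine similarity between embedding vectors. The sketch below assumes the embeddings already exist. Its four-dimensional vectors are invented and hand-labeled so the arithmetic stays readable. Real embedding models produce hundreds or thousands of dimensions, and a vector database would store and index them.

```python
import math

# Hypothetical embeddings with hand-labeled dimensions: [travel, money, hardware, leave].
# A real system would obtain these from an embedding model, not write them by hand.
DOCUMENTS = {
    "Global reimbursement procedures": [0.8, 0.9, 0.0, 0.1],
    "Laptop replacement policy": [0.0, 0.3, 0.9, 0.0],
    "Parental leave guidelines": [0.1, 0.2, 0.0, 0.9],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    # Angle-based similarity: 1.0 means the vectors point in the same direction.
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def semantic_search(query_vec: list[float], docs: dict[str, list[float]], top_k: int = 1) -> list[str]:
    ranked = sorted(docs, key=lambda title: cosine_similarity(query_vec, docs[title]), reverse=True)
    return ranked[:top_k]


# Hypothetical embedding of "how do I expense international travel".
query = [0.9, 0.7, 0.0, 0.0]
print(semantic_search(query, DOCUMENTS))  # ['Global reimbursement procedures']
```

The query and the winning title share no words. The match comes entirely from the two vectors pointing in a similar direction.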

5. Agentic AI

Agentic AI refers to AI systems capable of autonomously executing multi-step workflows with minimal human intervention. In a knowledge management context, agents can ingest new documents, update existing records, flag outdated content, summarize meeting transcripts, and proactively surface relevant information to the right people, all without being explicitly prompted.

6. Tacit Knowledge

Tacit knowledge is the informal, unstructured expertise that lives in people's heads rather than in documents: the "how we really do things here" knowledge. It is the most valuable and most elusive type of organizational knowledge. One of AI's most significant contributions to KM is helping to surface and capture this previously untrackable knowledge and make it accessible at scale.

How Companies Are Using AI for Internal Knowledge Management

The practical applications of AI in enterprise knowledge management span the full spectrum, from automating search to entirely replacing manual documentation workflows. Here are the most impactful patterns emerging across industries.

1. AI-Powered Internal Search and Retrieval

Intelligent search is the most widely adopted use case, allowing employees to ask questions in natural language and receive direct, context-aware answers instead of sifting through documents. Notion’s 2025 release of Notion 3.0 introduced enterprise-wide semantic search, and Affirm quickly replaced its standalone tool after seeing immediate productivity gains. The same pattern is evident across companies using Microsoft 365 Copilot, which surfaces relevant documents, emails, and meeting notes directly within Word, Outlook, and Teams, eliminating the need to switch tools to find information.

2. Autonomous Knowledge Agents

Agentic AI systems are moving beyond search to proactively manage knowledge: ingesting new information, updating records, and triggering workflows without human prompting. Deloitte’s State of AI in the Enterprise 2026 report cites a financial services firm that uses AI agents to capture meeting action items, draft follow-ups, and track completion, turning fleeting conversations into organized, actionable records.

Notion 3.0 rebuilt its AI layer around autonomous agents, and Ramp’s Head of AI & Ops, Ben Levick, noted the company can now spin up systems in minutes instead of hours, deploying agents to power entirely new workflows at scale.

3. Onboarding Acceleration and Training Enhancement

Onboarding is one of knowledge management’s toughest challenges: new hires are expected to absorb months of institutional knowledge in weeks. Generative AI chatbots ease this burden by giving employees instant access to answers across HR, technical, and product domains. This cuts the time lost digging through documentation and frees senior staff from repetitive questions. Master of Code Global built such a system, delivered through Slack, for a leading tech company. A major bank deployed a Microsoft Teams-based assistant that delivers policy and compliance information in seconds instead of hours.

4. R&D Knowledge Automation with RAG Frameworks

In research-intensive industries, duplicated effort is a costly and often invisible problem. R&D teams re-investigate issues already solved elsewhere simply because prior work is hard to find. Deloitte India documented one such case, in which a leading manufacturer deployed a RAG framework across its internal knowledge base so engineers could query years of research in natural language. Instead of multi-day archive searches, relevant findings are now surfaced and synthesized in minutes, turning hidden knowledge into a readily accessible asset.

5. EY's AI Center of Excellence

Ernst & Young provides one of the largest enterprise-scale deployments. EY established an internal AI Center of Excellence with formal governance policies for responsible AI use across the firm. By 2025, EY had documented 30 million AI-enabled processes internally and deployed 41,000 AI agents in production. This was not a pilot but a full-scale transformation of how institutional knowledge is created, maintained, and accessed across a global workforce.

6. Deloitte's PairD: Internal AI at 100,000 Employees

Deloitte developed PairD, an internal generative AI platform first deployed to 75,000 employees in December 2023 and scaled to 100,000 by mid-2024. PairD enables staff to automate project planning, prioritize tasks, draft content, and access organizational knowledge, all within Deloitte's secure internal environment. The platform exemplifies a growing enterprise pattern: rather than relying on general-purpose consumer AI tools, large organizations are building or licensing purpose-built internal knowledge AI systems with governance embedded from the start.

7. Microsoft 365 Copilot and Copilot Tuning

Microsoft’s approach embeds AI knowledge retrieval directly into the tools employees already use, eliminating the need for a separate system. Microsoft 365 Copilot is integrated across Word, Excel, PowerPoint, Outlook, and Teams. Without leaving those applications, it can summarize meetings, surface past documents, draft context-aware communications, and analyze internal data. At Build 2025, Microsoft introduced Copilot Tuning, which lets organizations fine-tune Copilot on proprietary data for domain-specific tasks. This makes organizational knowledge central to AI behavior rather than an afterthought.

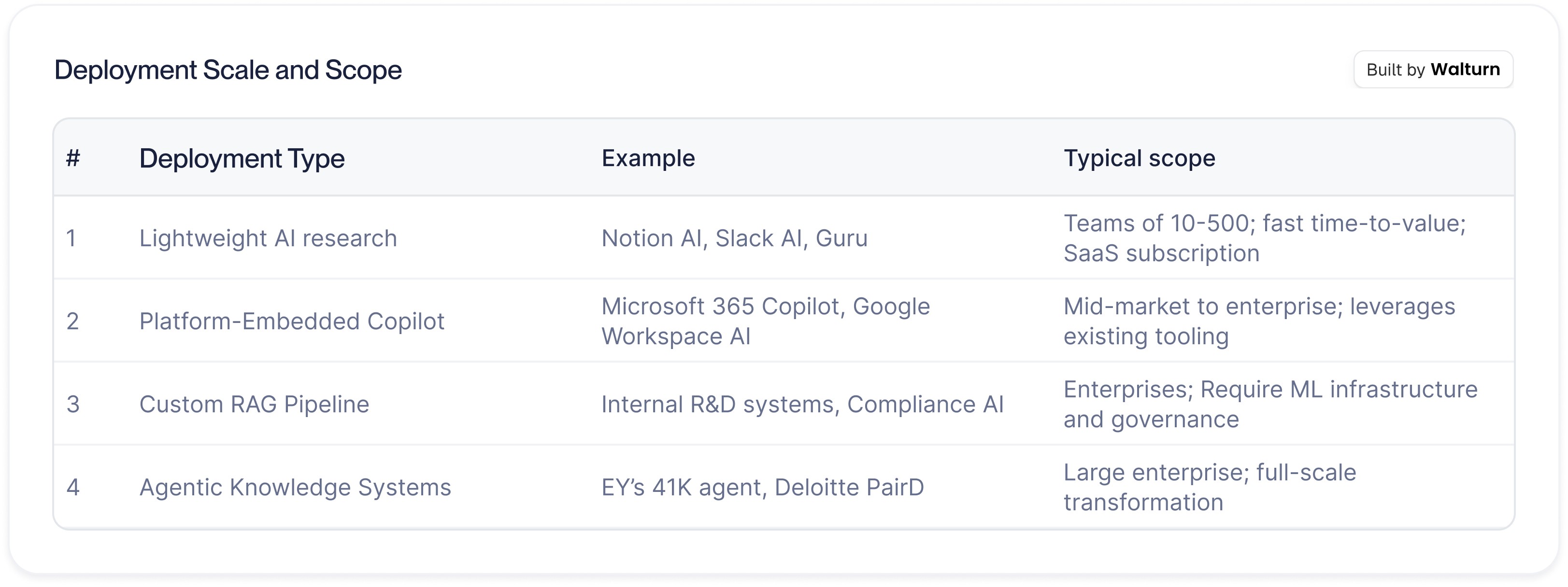

Deployment Scale and Scope

The following provides a sense of how different types of AI knowledge management deployments vary in scope and investment:

The Technical Stack Powering AI Knowledge Management

Understanding the technical architecture helps product and engineering leaders make informed build-vs-buy decisions.

NLP and Semantic Search: The foundation layer. NLP enables employees to query systems in plain language; semantic search retrieves based on conceptual relevance rather than keyword matches. NLP-based conversational search is growing at a 21.2% CAGR through 2030, according to GoSearch.

Retrieval-Augmented Generation (RAG): The dominant enterprise architecture. RAG grounds LLM responses in actual internal documents, dramatically reducing hallucinations and ensuring answers are traceable to specific sources.

Vector Databases: Specialized databases and database extensions (Pinecone, Weaviate, and pgvector for PostgreSQL) that store content as embeddings and enable fast similarity search. They form the retrieval backbone of most enterprise RAG implementations.

Machine Learning for Continuous Improvement: Effective AI knowledge systems learn from usage patterns over time, compounding their value as the system is used more widely.
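The article does not say how these systems learn from usage. One common pattern is sketched here as an assumption, not as any vendor's method. The system blends each result's retrieval score with a smoothed helpfulness rate built from thumbs-up/thumbs-down feedback. The class name, the 0.3 weight, the prior, and the document IDs are hypothetical, illustrative values.

```python
from collections import defaultdict


class FeedbackReranker:
    """Blend retrieval similarity with a helpfulness rate learned from user feedback.

    The weight and prior are hypothetical, illustrative values, not tuned settings.
    """

    def __init__(self, weight: float = 0.3, prior: float = 0.5, prior_strength: int = 2) -> None:
        self.weight = weight
        self.prior = prior
        self.prior_strength = prior_strength
        self.helpful: dict[str, int] = defaultdict(int)
        self.shown: dict[str, int] = defaultdict(int)

    def record(self, doc_id: str, was_helpful: bool) -> None:
        # Called whenever a user rates an answer that cited doc_id.
        self.shown[doc_id] += 1
        if was_helpful:
            self.helpful[doc_id] += 1

    def helpfulness(self, doc_id: str) -> float:
        # Smoothed rate: a document with no feedback starts at the prior, not at 0 or 1.
        return (self.helpful[doc_id] + self.prior * self.prior_strength) / (
            self.shown[doc_id] + self.prior_strength
        )

    def rerank(self, candidates: dict[str, float]) -> list[str]:
        # candidates maps doc_id -> retrieval similarity in [0, 1].
        def score(doc_id: str) -> float:
            return (1 - self.weight) * candidates[doc_id] + self.weight * self.helpfulness(doc_id)

        return sorted(candidates, key=score, reverse=True)


reranker = FeedbackReranker()
candidates = {"wiki/old-vpn-guide": 0.82, "wiki/new-vpn-guide": 0.78}
print(reranker.rerank(candidates))  # ['wiki/old-vpn-guide', 'wiki/new-vpn-guide']
for _ in range(5):
    reranker.record("wiki/old-vpn-guide", was_helpful=False)
    reranker.record("wiki/new-vpn-guide", was_helpful=True)
print(reranker.rerank(candidates))  # ['wiki/new-vpn-guide', 'wiki/old-vpn-guide']
```

The smoothing term keeps a single early vote from swinging a document to the top or bottom of the results.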

Agentic Frameworks: Orchestration layers built on models like Claude, GPT-4, and Gemini that enable AI systems to autonomously execute multi-step knowledge workflows: ingesting new information, resolving document conflicts, flagging outdated content, and proactively delivering relevant knowledge.
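At its core, an orchestration layer runs a loop. A model looks at the current state and picks a tool, the framework executes that tool, and the loop repeats until the model decides it is done. In the sketch below, `choose_next_action` is a hypothetical rule-based stand-in for the LLM call. A real framework would send the state and tool descriptions to a model. The three tools are simplified placeholders, and the step cap is a common safeguard against runaway loops.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class KnowledgeTask:
    document: str
    summary: Optional[str] = None
    flags: list[str] = field(default_factory=list)
    published: bool = False
    log: list[str] = field(default_factory=list)


# Placeholder tools; real ones would call an LLM, a search index, or a CMS API.
def summarize(task: KnowledgeTask) -> None:
    task.summary = task.document.split(".")[0] + "."  # first sentence as a stand-in


def flag_outdated(task: KnowledgeTask) -> None:
    if "deprecated" in task.document.lower():
        task.flags.append("references deprecated content")


def publish(task: KnowledgeTask) -> None:
    task.published = True


TOOLS: dict[str, Callable[[KnowledgeTask], None]] = {
    "summarize": summarize,
    "flag_outdated": flag_outdated,
    "publish": publish,
}


def choose_next_action(task: KnowledgeTask) -> Optional[str]:
    # Hypothetical stand-in for the model's decision; returns None to stop.
    if task.summary is None:
        return "summarize"
    if "flag_outdated" not in task.log:
        return "flag_outdated"
    if not task.flags and not task.published:
        return "publish"
    return None  # done, or flagged content is left for human review


def run_agent(task: KnowledgeTask, max_steps: int = 5) -> KnowledgeTask:
    for _ in range(max_steps):  # hard cap on steps guards against endless loops
        action = choose_next_action(task)
        if action is None:
            break
        TOOLS[action](task)
        task.log.append(action)
    return task


clean = run_agent(KnowledgeTask("Q3 travel policy update. Per diem rates increased."))
print(clean.log, clean.published)  # ['summarize', 'flag_outdated', 'publish'] True
```

A document that mentions deprecated content stops after flagging and stays unpublished, leaving the final call to a person.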

The Real Challenges: What Companies Get Wrong

Despite the compelling promise, implementation is not without friction. The difficult questions are not primarily about AI capability, which is already well demonstrated. They are about organizational readiness, data quality, and governance.

1. Legacy System Integration

Nearly 60% of AI leaders cite legacy system integration as their primary adoption challenge. Decades of enterprise knowledge are often locked in formats, databases, and systems that are difficult to ingest into modern AI platforms. Before AI can retrieve knowledge, that knowledge must first be accessible and structured, a significant undertaking that is often underestimated.
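Much of that groundwork is unglamorous format conversion: mapping each legacy export onto one common record shape that an ingestion pipeline can embed and index. The sketch below assumes two hypothetical exports. One is a CSV with `ID`, `Subject`, and `Content` columns. The other is a JSON list of objects with `key`, `name`, and optional `text` fields. The `Document` schema is likewise illustrative. Records that arrive without a body are returned separately so someone can fix them before ingestion.

```python
import csv
import io
import json
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    title: str
    body: str
    source_system: str  # kept so answers can point back to the original system


def from_legacy_csv(raw: str) -> list[Document]:
    # Hypothetical CSV export with columns ID, Subject, Content.
    rows = csv.DictReader(io.StringIO(raw))
    return [
        Document(f"csv-{r['ID']}", r["Subject"].strip(), (r["Content"] or "").strip(), "legacy_csv")
        for r in rows
    ]


def from_legacy_json(raw: str) -> list[Document]:
    # Hypothetical JSON export: a list of {"key", "name", "text"} objects; "text" may be missing.
    return [
        Document(f"json-{item['key']}", item["name"].strip(), (item.get("text") or "").strip(), "legacy_json")
        for item in json.loads(raw)
    ]


def split_ready(docs: list[Document]) -> tuple[list[Document], list[Document]]:
    # Documents with an empty body need human attention before they are indexed.
    ready = [d for d in docs if d.body]
    needs_review = [d for d in docs if not d.body]
    return ready, needs_review


csv_export = "ID,Subject,Content\n17,Expense policy,Submit receipts within 30 days.\n"
json_export = '[{"key": "a9", "name": "VPN setup", "text": "Install the client first."}, {"key": "b2", "name": "Old org chart"}]'
ready, needs_review = split_ready(from_legacy_csv(csv_export) + from_legacy_json(json_export))
print([d.doc_id for d in ready], [d.doc_id for d in needs_review])
```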

2. Data Quality

AI knowledge systems are only as good as the content they ingest. DMG Consulting's research found that conflicting, outdated, and missing information has historically been the primary impediment to effective KMS platforms. AI can help identify these issues, but initial data remediation still requires significant human effort.
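Part of that remediation can be automated once key facts have been extracted from documents. The minimal sketch below assumes facts are already available as hypothetical `(doc_id, attribute, value)` tuples; the extraction step itself is out of scope. Grouping by attribute surfaces documents that disagree, and comparing against a required list surfaces gaps. Deciding which conflicting value is correct remains a human task.

```python
from collections import defaultdict

Fact = tuple[str, str, str]  # (doc_id, attribute, value); a hypothetical extracted-fact format


def find_conflicts(facts: list[Fact]) -> dict[str, dict[str, list[str]]]:
    # Group document IDs by attribute and value; more than one value means a conflict.
    by_attr: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for doc_id, attribute, value in facts:
        by_attr[attribute][value].append(doc_id)
    return {attr: dict(values) for attr, values in by_attr.items() if len(values) > 1}


def find_missing(facts: list[Fact], required: list[str]) -> list[str]:
    # Attributes the organization expects to be documented but that no document states.
    present = {attribute for _, attribute, _ in facts}
    return sorted(set(required) - present)


facts = [
    ("hr/handbook", "pto_days_per_year", "20"),
    ("hr/faq", "pto_days_per_year", "15"),
    ("finance/travel", "receipt_deadline_days", "30"),
]
print(find_conflicts(facts))
print(find_missing(facts, ["pto_days_per_year", "receipt_deadline_days", "remote_work_stipend"]))
```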

3. Cultural Adoption

Deloitte's 2026 enterprise AI survey found that the AI skills gap is seen as the biggest barrier to integration, and that education was the top way companies adjusted their talent strategies due to AI. Without deliberate change management, even the best-designed knowledge AI sits unused. People need to trust the system, understand how to interact with it effectively, and see it integrate naturally into their existing workflows.

4. Governance and Access Control

Internal knowledge often contains sensitive information: personnel records, unreleased product plans, legal communications, and financial data. AI knowledge systems must implement granular permission structures so that the AI surfaces only what a given employee is authorized to see. Notion 3.0 addressed this directly with page-level access controls that ensure knowledge agents respect the same permission hierarchies as the underlying content.
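A common way to enforce this is sketched below as a general pattern, not as Notion's actual implementation. Each document's access list is copied at ingestion time, and results are filtered on it before ranking or generation, so restricted text never reaches the model's context. The `Doc` type and the group names are hypothetical, and a substring match stands in for real retrieval.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # access list copied from the source system at ingestion


def permission_aware_search(query: str, docs: list[Doc], user_groups: set[str]) -> list[str]:
    # 1. Filter first: keep only documents sharing at least one group with the user.
    visible = [d for d in docs if d.allowed_groups & user_groups]
    # 2. Then retrieve; a substring match stands in for semantic search here.
    return [d.doc_id for d in visible if query.lower() in d.text.lower()]


docs = [
    Doc("handbook/pto", "PTO policy: 20 days per year.", frozenset({"all-staff"})),
    Doc("hr/salary-bands", "Salary bands and PTO exceptions by level.", frozenset({"hr"})),
]
print(permission_aware_search("pto", docs, {"all-staff", "engineering"}))  # ['handbook/pto']
print(permission_aware_search("pto", docs, {"all-staff", "hr"}))  # ['handbook/pto', 'hr/salary-bands']
```

Filtering before retrieval, rather than redacting the model's answer afterwards, means a restricted document cannot leak through a summary.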

Build, Buy, or Integrate?

As enterprises shift from experimentation to production, the build-versus-buy calculus is evolving rapidly. GoSearch data shows that enterprise adoption shifted from a roughly 50/50 build-vs-buy split in 2024 to purchasing 76% of AI knowledge solutions in 2025, driven by the speed at which pre-built solutions reach production relative to in-house development.

The right answer depends on three variables:

Data Sensitivity: The more sensitive your internal knowledge, the stronger the case for building internally or using on-premise deployments where data never leaves your environment.

Integration Complexity: The more complex your existing tool ecosystem, the more valuable a platform with deep native integrations (e.g., Microsoft 365, Slack, Notion) becomes relative to a standalone custom build.

Time-to-Value Urgency: Platforms like Microsoft 365 Copilot and Notion AI can be activated in days. Custom RAG implementations typically take months but deliver far greater control and domain specificity.

For most mid-market and enterprise organizations, the practical path is hybrid: adopt a proven AI knowledge platform for broad internal use while building custom RAG pipelines for highly proprietary or regulated knowledge domains that require maximum control and auditability.

What's Coming Next

The trajectory of AI in enterprise knowledge management is clear: systems are moving from passive retrieval to proactive, self-maintaining intelligence. Four near-term developments will define the next phase:

Multimodal Knowledge Ingestion: Future systems will ingest not just text but audio (meeting recordings), video (training content), and images (diagrams, whiteboards), capturing forms of organizational knowledge that were previously invisible to any KMS.

Self-Updating Knowledge Bases: KMWorld's 2025 AI 100 report highlights self-updating knowledge bases as a defining trend. These are systems that continuously incorporate new information, maintain version history, and proactively deprecate outdated content without manual intervention.
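The version-control and deprecation parts of that trend can be illustrated with a small in-memory model. This is a sketch under assumptions, not a description of how any product in the KMWorld report works. Updates append a new version instead of overwriting the old one. A periodic job marks articles whose latest version is older than a cutoff. The one-year threshold and all names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Version:
    number: int
    text: str
    updated: date


@dataclass
class Article:
    article_id: str
    versions: list[Version] = field(default_factory=list)
    deprecated: bool = False

    def update(self, text: str, on: date) -> None:
        # Append rather than overwrite, so earlier versions stay available for audit.
        self.versions.append(Version(len(self.versions) + 1, text, on))
        self.deprecated = False  # fresh content is no longer stale

    @property
    def current(self) -> Version:
        return self.versions[-1]


def deprecate_stale(articles: list[Article], today: date, max_age_days: int = 365) -> list[str]:
    # Mark every article whose latest version predates the cutoff; return their IDs.
    cutoff = today - timedelta(days=max_age_days)
    stale = [a for a in articles if a.versions and a.current.updated < cutoff]
    for article in stale:
        article.deprecated = True
    return [a.article_id for a in stale]


vpn = Article("it/vpn-guide")
vpn.update("Use the v1 client.", date(2023, 1, 10))
travel = Article("finance/travel")
travel.update("Receipts due in 30 days.", date(2024, 11, 1))
print(deprecate_stale([vpn, travel], today=date(2025, 6, 1)))  # ['it/vpn-guide']
```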

Knowledge Graphs: Structured representations of organizational knowledge that map relationships between concepts, people, processes, and documents. GoSearch data shows knowledge graphs reduce issue resolution time by 28.6% through more precise, personalized information delivery.
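At its simplest, a knowledge graph is a set of subject-relation-object triples plus a way to traverse them. The sketch below stores hypothetical triples linking people, processes, and documents. It answers a direct lookup and runs a breadth-first search to collect everything within a given number of hops. Entity names and relation labels are invented for illustration. Production systems typically use a graph database and a richer schema.

```python
from collections import defaultdict, deque


class KnowledgeGraph:
    def __init__(self) -> None:
        self.triples: list[tuple[str, str, str]] = []
        self.neighbors: dict[str, list[str]] = defaultdict(list)  # undirected view for traversal

    def add(self, subject: str, relation: str, obj: str) -> None:
        self.triples.append((subject, relation, obj))
        self.neighbors[subject].append(obj)
        self.neighbors[obj].append(subject)

    def objects(self, subject: str, relation: str) -> list[str]:
        # Direct lookup, e.g. "which processes does Priya own?"
        return [o for s, r, o in self.triples if s == subject and r == relation]

    def related(self, start: str, max_hops: int = 2) -> list[str]:
        # Breadth-first search: every entity reachable within max_hops edges.
        seen = {start}
        queue = deque([(start, 0)])
        found = []
        while queue:
            node, depth = queue.popleft()
            if depth == max_hops:
                continue
            for neighbor in self.neighbors[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    found.append(neighbor)
                    queue.append((neighbor, depth + 1))
        return found


kg = KnowledgeGraph()
kg.add("Priya", "owns", "Vendor onboarding")
kg.add("Vendor onboarding", "documented_in", "procurement/vendor-guide")
kg.add("procurement/vendor-guide", "mentions", "Tax forms")
kg.add("Marco", "owns", "Expense approvals")
print(kg.objects("Priya", "owns"))  # ['Vendor onboarding']
print(kg.related("Priya"))  # ['Vendor onboarding', 'procurement/vendor-guide']
```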

Agentic Governance at Scale: Deloitte's research notes that only one in five companies currently has a mature model for governing autonomous AI agents. The next major wave of enterprise AI investment will be in governance frameworks that allow agentic knowledge systems to operate safely, reliably, and accountably.

Conclusion

The companies that succeed with AI in knowledge management share a mindset: they treat organizational knowledge as a product that must be designed, maintained, and improved. AI does not eliminate the work but makes it manageable, automating tasks that once required entire teams and reducing searches that took half an hour to just seconds. Organizations that invest in clean data, strong platform architecture, and cultural practices that drive adoption will build lasting advantages, as every interaction makes the system smarter and every document ingested makes it more complete. Once the knowledge flywheel is in motion, it becomes a powerful force that competitors struggle to match.

References

Brandonmost. (2026, January 21). What Is Enterprise AI Knowledge Management? 2026 Guide, FAQ & Trends. GoSearch FAQs + Answers. https://www.gosearch.ai/faqs/enterprise-ai-knowledge-management-guide-2026/

The State of AI in the Enterprise 2026 AI report. (2026, February 26). Deloitte. https://www.deloitte.com/global/en/issues/generative-ai/state-of-ai-in-enterprise.html

KMWorld 100 Companies that Matter in Knowledge Management 2025. (2025, March 10). KMWorld. https://www.kmworld.com/Articles/Editorial/Features/KMWorld-100-Companies-That-Matter-in-Knowledge-Management-2025-167500.aspx

September 18, 2025 – Notion 3.0: Agents. (2025, September 18). Notion. https://www.notion.com/releases/2025-09-18

Revolutionising R&D: How Generative AI turbocharged knowledge management for a leading manufacturer. (2024, September 8). Deloitte. https://www.deloitte.com/in/en/what-we-do/case-studies-collection/how-generative-ai-turbocharged-knowledge-management-for-a-leading-manufacturer.html

Pohrebniyak, I. (2025, November 14). AI for Knowledge Management: Powering The Wind Beneath Your Business Wings. Master of Code Global. https://masterofcode.com/blog/ai-for-knowledge-management