Velocity and Stability in AI-Assisted Software Development

Summary

AI accelerates software development, but speed without structural governance introduces fragility. Software systems are complex, tightly coupled ecosystems where increased velocity can amplify drift, defects, and cognitive overload. Velocity–Stability Coupling Theory reframes AI as a gain amplifier, arguing that sustainable acceleration requires bounded speed, expanded absorptive capacity, and disciplined governance.

Key insights:

Velocity–Stability Coupling: Speed and system resilience are nonlinearly linked; beyond a threshold, more velocity reduces stability.

AI as Gain Amplifier: AI accelerates structural change, amplifying both productivity and fragility.

Local Correctness Fallacy: Passing tests does not guarantee architectural coherence.

Cognitive Saturation Limit: Human review capacity does not scale with AI output.

Churn–Defect Correlation: High modification rates correlate with defect density and drift.

Distributional Fragility: Acceleration exposes weaknesses under evolving conditions.

Stability Envelope Constraint: Every system has bounded safe velocity limits.

Absorptive Capacity Expansion: Sustainable AI requires stronger testing, modularity, and governance.

Measurement Bias Risk: Throughput metrics obscure long-term degradation.

Governed Acceleration Principle: The goal is controlled speed, not maximum speed.

Introduction

Software engineering has historically evolved through successive abstractions that reduce friction in development workflows. Structured programming reduced cognitive overload. Object orientation enabled modular thinking. Continuous integration shortened feedback cycles. DevOps dissolved silos between development and operations. Each transformation increased velocity while attempting to preserve reliability. Artificial Intelligence now represents the most powerful abstraction layer yet introduced. Large language models can synthesize code fragments, propose refactors, generate tests, and document interfaces within seconds.

Empirical evidence demonstrates measurable gains in short-horizon productivity tasks. However, productivity in constrained experimental settings does not automatically translate into sustainable system evolution. Software ecosystems are complex adaptive systems. Architectural dependencies intertwine modules. Organizational processes interact with technical design. Cognitive review practices shape code quality.

When velocity increases without structural reinforcement, the rate of change alters system dynamics in nonlinear ways. The fundamental question is not whether AI can accelerate development. It demonstrably can. The deeper question is whether acceleration can coexist with structural resilience.

The Problem of Linear Productivity Assumptions

The prevailing narrative equates speed with progress. Increased throughput is presumed to correlate directly with value creation. This assumption rests on a linear productivity model that fails to capture complex system behavior. In tightly coupled systems, an increasing rate of change can amplify fragility rather than reduce friction. Software ecosystems exhibit high dependency density, iterative modification cycles, and cognitive bottlenecks. When acceleration exceeds absorptive capacity, instability accumulates invisibly until it manifests as failure. A rigorous systems-theoretic reframing is therefore required.

Empirical Foundations from Software Engineering Research

1. Code Churn and Defect Density

Empirical software engineering research consistently demonstrates that high code churn correlates with defect density. Modules that undergo frequent modifications are more likely to experience post-release bugs. Churn increases the likelihood of unintended interactions, especially in tightly coupled systems. When AI tools increase generation speed, churn frequency rises accordingly. Even when individual contributions are locally correct, aggregate churn introduces systemic instability.
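The churn–defect relationship can be made concrete with a small sketch. It normalizes churn by module size (lines added plus deleted, divided by lines of code) and computes a Pearson correlation with post-release defect counts. The module names and numbers are invented placeholders, not data from any study. Pearson correlation is only one of several possible association measures, and four points are far too few for a real analysis.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass
class ModuleHistory:
    """Hypothetical per-module history over one observation window."""
    name: str
    loc: int                   # module size in lines of code
    lines_added: int
    lines_deleted: int
    post_release_defects: int


def relative_churn(m: ModuleHistory) -> float:
    """Lines added plus deleted, normalized by module size."""
    return (m.lines_added + m.lines_deleted) / max(m.loc, 1)


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation; assumes equal-length, non-constant inputs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Invented numbers for illustration only; not data from any study.
modules = [
    ModuleHistory("billing", 4000, 900, 300, 7),
    ModuleHistory("auth", 2500, 200, 100, 2),
    ModuleHistory("reports", 6000, 300, 150, 1),
    ModuleHistory("gateway", 3000, 1200, 600, 9),
]
churn = [relative_churn(m) for m in modules]
defects = [float(m.post_release_defects) for m in modules]
print(f"churn-defect correlation: {pearson(churn, defects):.2f}")
```

Normalizing by size keeps large, stable modules from looking risky just because they are large.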

Research on technical debt accumulation further reinforces this concern. Debt accumulation often follows nonlinear trajectories when velocity is high and governance is weak. Short-term feature delivery gains can mask long-term structural liabilities. AI-generated code may accelerate feature velocity while also accelerating architectural shortcuts. Over successive iterations, compounded shortcuts increase maintenance complexity and the frequency of refactors. Velocity–Stability Coupling Theory predicts precisely such nonlinear debt growth under unbounded acceleration.

2. Architectural Erosion

Architectural erosion occurs when incremental modifications degrade systemic coherence. Design intent becomes diluted through successive local optimizations. AI-generated code optimizes for statistical plausibility rather than architectural intentionality. Without governance mechanisms enforcing systemic alignment, locally valid suggestions may conflict with global invariants. Over time, this drift accumulates into structural incoherence.

3. Automation Bias and Cognitive Saturation

Human-automation interaction research demonstrates that automation alters cognitive engagement. Fluent AI output reduces the perceived need for deep scrutiny. Under velocity amplification, developers shift from generative reasoning to rapid inspection, and cognitive bandwidth becomes the limiting variable. When AI output exceeds review capacity, oversight quality declines nonlinearly. This saturation effect is central to modeling absorptive capacity.

Velocity–Stability Coupling Theory

1. Core Proposition

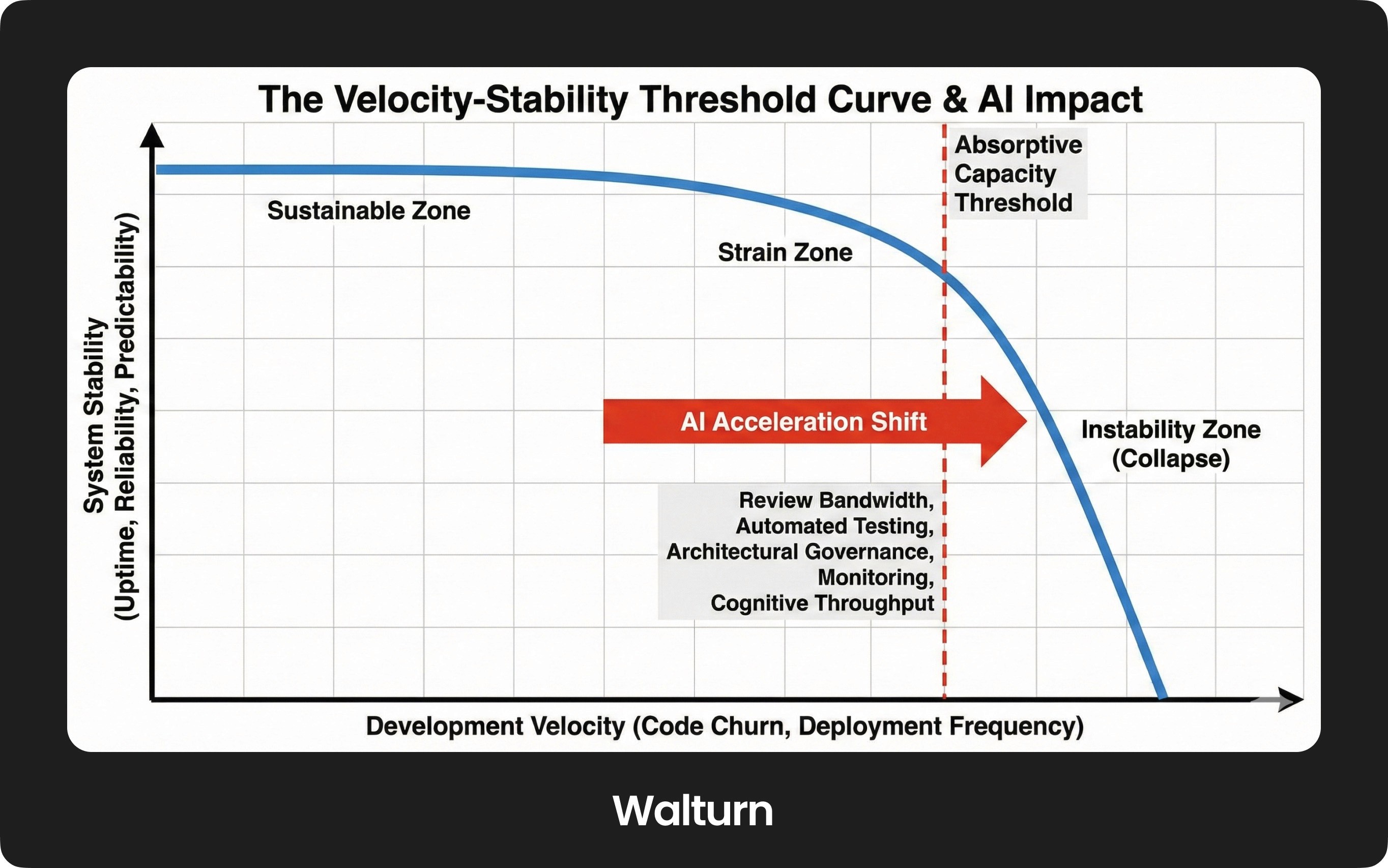

Velocity–Stability Coupling Theory posits that development velocity and structural stability are nonlinearly coupled within software ecosystems. Below a defined threshold, increases in velocity produce productivity gains without destabilization. Beyond that threshold, fragility increases disproportionately. AI functions as a gain amplifier, driving systems toward instability more rapidly when unbounded.

2. Formal Model Abstraction 1: Velocity–Absorptive Capacity Curve

Imagine a curve with development velocity on the horizontal axis and system stability on the vertical axis. At low velocity, stability remains high. As velocity approaches absorptive capacity, stability begins to decline gradually. Once velocity exceeds absorptive capacity, stability declines sharply. Absorptive capacity includes review bandwidth, automated testing robustness, architectural governance, monitoring infrastructure, and cognitive throughput. AI shifts the system to the right along this curve. Without proportional expansion of absorptive capacity, the instability threshold is crossed.
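The curve can be sketched as a piecewise function. Its exact shape is an assumption: a slow quadratic erosion below capacity and exponential decay above it. The parameters `gradual` and `sharpness` are hypothetical. The only property taken from the text is that the decline steepens once velocity exceeds absorptive capacity.

```python
from math import exp


def stability(velocity: float, capacity: float,
              gradual: float = 0.2, sharpness: float = 3.0) -> float:
    """Illustrative stability score in [0, 1] for a given velocity.

    Below capacity, stability erodes slowly (quadratic term); above it,
    stability decays exponentially with the relative overload. The two
    branches meet at velocity == capacity. Both parameters are hypothetical.
    """
    if velocity <= capacity:
        return 1.0 - gradual * (velocity / capacity) ** 2
    overload = (velocity - capacity) / capacity
    return (1.0 - gradual) * exp(-sharpness * overload)


baseline_velocity = 8.0                    # hypothetical changes per day
ai_velocity = baseline_velocity * 1.5      # AI modeled as a x1.5 gain (assumption)
print(f"baseline, capacity 10:   {stability(baseline_velocity, 10.0):.3f}")
print(f"AI-assisted, capacity 10: {stability(ai_velocity, 10.0):.3f}")
print(f"AI-assisted, capacity 15: {stability(ai_velocity, 15.0):.3f}")
```

In this toy model, the same AI-driven shift to the right either crosses the threshold or stays safe, depending only on whether absorptive capacity was expanded alongside it.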

3. Formal Model Abstraction 2: Drift Amplification Loop

In the Drift Amplification Loop, AI increases output velocity. Increased velocity raises code churn. Elevated churn accelerates architectural drift. Drift reduces coherence, increasing defect probability. Defects require reactive patches, further increasing churn. This positive feedback loop accelerates under AI amplification. Governance mechanisms must dampen this loop.
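A minimal linear simulation of the loop follows, with hypothetical coefficients. Churn is new work plus reactive patches. Drift accumulates from churn, defects scale with drift, and patches scale with defects. Governance is modeled as a damping term that removes a fraction of drift each step. Under these assumptions, drift is multiplied each step by the loop gain g = (1 − d) + αβγ. Drift grows without bound when g ≥ 1 and settles at αv / (1 − g) when g < 1.

```python
from dataclasses import dataclass


@dataclass
class LoopParams:
    """Hypothetical coefficients for the Drift Amplification Loop."""
    drift_per_churn: float = 0.1      # alpha: drift added per unit of churn
    defects_per_drift: float = 0.5    # beta: defects per unit of drift
    patches_per_defect: float = 2.0   # gamma: reactive patches per defect
    governance_damping: float = 0.0   # d: fraction of drift removed per step


def loop_gain(p: LoopParams) -> float:
    """Per-step multiplier on drift: (1 - d) + alpha * beta * gamma."""
    return (1.0 - p.governance_damping) + (
        p.drift_per_churn * p.defects_per_drift * p.patches_per_defect
    )


def simulate_drift(velocity: float, steps: int, p: LoopParams) -> list[float]:
    """Iterate velocity -> churn -> drift -> defects -> patches -> churn."""
    drift, patches = 0.0, 0.0
    history = []
    for _ in range(steps):
        churn = velocity + patches                   # new work plus reactive patches
        drift = (1.0 - p.governance_damping) * drift + p.drift_per_churn * churn
        defects = p.defects_per_drift * drift        # drift raises defect probability
        patches = p.patches_per_defect * defects     # defects trigger more churn
        history.append(drift)
    return history


ungoverned = simulate_drift(10.0, 30, LoopParams())
governed = simulate_drift(10.0, 30, LoopParams(governance_damping=0.3))
print(f"loop gain without governance: {loop_gain(LoopParams()):.2f}")
print(f"drift after 30 steps, ungoverned: {ungoverned[-1]:.1f}")
print(f"drift after 30 steps, governed:   {governed[-1]:.1f}")
```

With these numbers, the ungoverned loop has gain 1.1 and drift compounds every step. A damping of 0.3 brings the gain to 0.8, and drift converges toward 5.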

4. Formal Model Abstraction 3: Stability Envelope Model

The Stability Envelope defines the bounded region within which velocity fluctuations do not destabilize the system. Governance expands the envelope. Structural neglect contracts it. Sustainable acceleration requires operating within this envelope. Velocity maximization without envelope expansion guarantees fragility.
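As a simplifying assumption, the envelope can be reduced to a single upper bound on velocity: a safety margin applied to absorptive capacity. Governance investment then expands the envelope by adding capacity. The class, field names, and the 0.8 margin are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StabilityEnvelope:
    """Hypothetical envelope: a safe upper bound on velocity derived from capacity."""
    absorptive_capacity: float   # e.g., changes per week the system can absorb
    safety_margin: float = 0.8   # operate below this fraction of capacity

    @property
    def upper_bound(self) -> float:
        return self.absorptive_capacity * self.safety_margin

    def expand(self, added_capacity: float) -> "StabilityEnvelope":
        """Governance investment (tests, review, modularity) widens the envelope."""
        return StabilityEnvelope(self.absorptive_capacity + added_capacity,
                                 self.safety_margin)

    def breaches(self, velocities: list[float]) -> list[int]:
        """Indices of periods whose velocity exceeded the envelope's upper bound."""
        return [i for i, v in enumerate(velocities) if v > self.upper_bound]


weekly_velocity = [30.0, 42.0, 55.0, 38.0, 61.0]   # invented weekly change counts
envelope = StabilityEnvelope(absorptive_capacity=60.0)
print("breaches before expansion:", envelope.breaches(weekly_velocity))
print("breaches after expansion: ", envelope.expand(20.0).breaches(weekly_velocity))
```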

Extended Empirical Integration and Theoretical Advancement

1. Longitudinal Evidence and Nonlinear Fragility

Longitudinal repository studies show that high modification frequency predicts future instability clusters. Defects are not randomly distributed but concentrate in high-churn regions. When AI increases churn frequency system-wide, the risk of defect clustering increases. Nonlinear fragility emerges when correlated modifications affect central dependency nodes.

Network-based analyses of software dependencies reveal that central modules act as structural keystones. AI suggestions applied disproportionately to such modules can increase systemic vulnerability. Velocity–Stability Coupling Theory predicts a steeper decline in stability in highly connected regions.
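To illustrate why churn in keystone modules matters more, the sketch below weights each module's churn by its fan-in, meaning how many modules depend on it. Fan-in is used as a simple stand-in for centrality. Measures such as betweenness or PageRank are common alternatives. The graph, module names, churn counts, and the scoring formula are all hypothetical.

```python
from collections import Counter


def fan_in(dependencies: dict[str, list[str]]) -> Counter:
    """In-degree of each module: how many modules depend on it."""
    counts = Counter({module: 0 for module in dependencies})
    for deps in dependencies.values():
        counts.update(deps)
    return counts


def churn_risk(dependencies: dict[str, list[str]],
               churn: dict[str, int]) -> dict[str, float]:
    """Hypothetical risk score: churn weighted by (1 + normalized fan-in)."""
    counts = fan_in(dependencies)
    max_fan_in = max(counts.values(), default=0) or 1
    return {m: churn.get(m, 0) * (1 + counts[m] / max_fan_in) for m in counts}


# Hypothetical dependency graph: each key lists the modules it imports.
deps = {
    "checkout": ["pricing", "auth"],
    "reports": ["pricing"],
    "admin": ["auth", "pricing"],
    "pricing": ["core"],
    "auth": ["core"],
    "core": [],
}
churn = {"pricing": 40, "reports": 40, "core": 10}   # invented change counts
for module, score in sorted(churn_risk(deps, churn).items(), key=lambda kv: -kv[1]):
    print(f"{module:10s} {score:6.1f}")
```

Here `pricing` and `reports` have identical churn. Because three modules depend on `pricing`, its score doubles.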

2. Cognitive Throughput as a Quantitative Variable

Absorptive capacity includes measurable variables. Review latency, test coverage density, and mean time to defect detection can all be quantified. Cognitive load studies demonstrate that inspection accuracy declines as task density increases, and AI increases task density. When the volume of changes awaiting review exceeds reviewers' throughput limit, oversight degrades at an accelerating rate.
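Queueing theory gives one simple way to see why review latency grows nonlinearly as demand approaches capacity. The sketch below assumes an M/M/1 model (random arrivals, exponentially distributed review times, a single review queue), which is a strong simplification of real review processes. The review rate of 10 changes per day is hypothetical. In practice, teams often keep latency down by inspecting less deeply rather than letting the queue grow, which is the oversight degradation described above.

```python
def review_utilization(arrival_rate: float, review_rate: float) -> float:
    """Share of review capacity consumed: rho = lambda / mu."""
    return arrival_rate / review_rate


def expected_review_latency(arrival_rate: float, review_rate: float) -> float:
    """Mean time a change spends queued plus in review under an M/M/1 model,
    W = 1 / (mu - lambda). The queue never settles once lambda >= mu."""
    if arrival_rate >= review_rate:
        return float("inf")
    return 1.0 / (review_rate - arrival_rate)


REVIEW_RATE = 10.0  # hypothetical: changes per day the team can inspect carefully
for arrivals in (5.0, 8.0, 9.5, 12.0):
    rho = review_utilization(arrivals, REVIEW_RATE)
    latency = expected_review_latency(arrivals, REVIEW_RATE)
    print(f"arrivals={arrivals:5.1f}/day  utilization={rho:4.2f}  latency={latency:.2f} days")
```

Raising utilization from 0.5 to 0.95 multiplies expected latency tenfold, from 0.2 to 2 days.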

3. Measurement Bias and Metric Fixation

Velocity-centric metrics, such as cycle time, create a structural form of blindness: they reward speed, while architectural coherence and long-term maintainability remain less visible and harder to quantify. Metric fixation incentivizes superficial productivity while masking debt accumulation. Stability metrics such as architectural entropy and rollback frequency must complement velocity metrics.
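The text does not define architectural entropy, so the sketch below uses one possible proxy as an assumption: the normalized Shannon entropy of how a period's changes are spread across modules. Values near 1.0 mean changes are scattered widely; values near 0.0 mean they are concentrated. Rollback frequency is taken as rolled-back deployments divided by total deployments. The sprint data is invented.

```python
from collections import Counter
from math import log2


def change_entropy(changed_modules: list[str]) -> float:
    """Normalized Shannon entropy of how changes spread across modules.

    0.0: every change hit one module; 1.0: changes spread evenly.
    Treated here as one possible proxy for architectural entropy (an assumption).
    """
    counts = Counter(changed_modules)
    if len(counts) <= 1:
        return 0.0
    total = sum(counts.values())
    h = -sum((c / total) * log2(c / total) for c in counts.values())
    return h / log2(len(counts))


def rollback_frequency(deployments: int, rollbacks: int) -> float:
    """Share of deployments that had to be rolled back."""
    return rollbacks / deployments if deployments else 0.0


# Hypothetical sprint: which module each merged change touched, plus deploy stats.
sprint_changes = ["auth", "billing", "auth", "ui", "search", "billing", "export", "ui"]
print(f"change entropy:     {change_entropy(sprint_changes):.2f}")
print(f"rollback frequency: {rollback_frequency(deployments=40, rollbacks=3):.3f}")
```

Tracking both numbers per sprint next to cycle time keeps a fall in cycle time from being read as an improvement when entropy and rollbacks are rising.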

Boundary Conditions and Failure Modes

1. Distributional Shift

AI models trained on historical corpora may misalign with evolving frameworks. Under a distributional shift, the quality of suggestions declines. Acceleration amplifies misalignment. Stability declines faster when shift conditions coincide with high velocity.

2. Temporal Drift

Dependencies evolve continuously. AI suggestions may reference outdated patterns. Under high velocity, obsolescence propagates rapidly, and stability envelopes contract when temporal recalibration lags behind acceleration.
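One concrete form of temporal recalibration is a CI check that rejects AI-suggested dependency versions older than a floor the team currently supports. The sketch below assumes plain dotted versions with the same number of components. Real ecosystems should rely on their package manager's own version rules, such as those for pre-release tags. The package names and the policy dictionary are hypothetical.

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Parse a plain dotted version such as '2.31.0' (no pre-release tags)."""
    return tuple(int(part) for part in version.split("."))


def outdated_pins(proposed: dict[str, str],
                  minimum_supported: dict[str, str]) -> list[str]:
    """Packages whose proposed version is older than the team's supported floor.

    Assumes both versions use the same number of dotted components.
    """
    flagged = []
    for package, version in proposed.items():
        floor = minimum_supported.get(package)
        if floor is not None and parse_version(version) < parse_version(floor):
            flagged.append(package)
    return sorted(flagged)


# Hypothetical package names and version floors, for illustration only.
suggested = {"httpclient": "2.19.1", "webframework": "4.2.11", "csvtools": "1.0.0"}
policy = {"httpclient": "2.31.0", "webframework": "4.2.0"}
print(outdated_pins(suggested, policy))
```

The floor needs regular updates. If it lags, the check passes stale suggestions, which is the recalibration lag the paragraph warns about.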

3. Organizational Heterogeneity

Absorptive capacity varies by organization. Mature DevSecOps pipelines widen stability envelopes. Weak governance narrows them. Uniform acceleration strategies ignore contextual heterogeneity.

Dynamic Stability Envelope Expansion

Absorptive capacity can be expanded deliberately. Investments in test coverage, static analysis, modular refactoring, and developer training widen stability envelopes. AI adoption should follow structural reinforcement. Phased integration reduces premature instability. Dynamic Stability Envelope Expansion formalizes this process:

Phase one: Strengthen absorptive capacity.

Phase two: Introduce bounded acceleration.

Phase three: Monitor drift and recalibrate velocity.

This phased approach aligns acceleration with resilience engineering principles.
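The three phases can be read as a decision rule that sets the cap on AI-assisted changes for the next period. The sketch below is one hypothetical encoding. Drift above tolerance forces a reduction (phase three). Capacity signals below target hold velocity flat (phase one). Otherwise velocity may rise by a bounded step (phase two). All signal names, thresholds, and the 10% step are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Hypothetical per-period readings; names and scales are illustrative."""
    test_coverage: float        # fraction of lines covered, 0..1
    review_utilization: float   # review demand / review capacity
    drift_indicator: float      # e.g., change entropy or rollback rate, 0..1


def next_velocity_cap(current_cap: float, s: Signals,
                      min_coverage: float = 0.8,
                      max_utilization: float = 0.75,
                      max_drift: float = 0.3,
                      step: float = 0.1) -> float:
    """Return the cap on AI-assisted changes for the next period."""
    # Phase three: drift above tolerance forces recalibration downward.
    if s.drift_indicator > max_drift:
        return current_cap * (1 - step)
    # Phase one: absorptive capacity not yet strengthened, so hold velocity.
    if s.test_coverage < min_coverage or s.review_utilization > max_utilization:
        return current_cap
    # Phase two: capacity is in place, so allow a bounded increase.
    return current_cap * (1 + step)


cap = 50.0  # hypothetical: AI-assisted changes merged per week
print(next_velocity_cap(cap, Signals(0.70, 0.60, 0.10)))  # coverage too low: hold
print(next_velocity_cap(cap, Signals(0.85, 0.60, 0.10)))  # healthy: bounded increase
print(next_velocity_cap(cap, Signals(0.90, 0.50, 0.40)))  # drift: recalibrate down
```

The drift check comes first on purpose. A team with strong coverage can still be drifting, and in that case the rule should slow down rather than speed up.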

Research Program and Testable Hypotheses

Velocity–Stability Coupling Theory generates empirically testable hypotheses:

Defect density increases nonlinearly beyond velocity thresholds.

Architectural drift correlates with AI usage density when absorptive capacity remains constant.

Automation bias increases under compressed review cycles.

Stability envelope width predicts sustainable acceleration range.

Longitudinal multi-repository data combined with cognitive experiments can test these predictions. Mixed-method research designs are required to integrate quantitative churn metrics with qualitative architectural assessment.

Corporate Programming Environments and the Governance of Acceleration

1. Enterprise Codebases as High-Coupling Socio-Technical Systems

Corporate programming environments differ fundamentally from experimental or greenfield development contexts. Enterprise systems are rarely modular in the idealized sense described in architectural textbooks. They are deeply interwoven with legacy services, regulatory compliance layers, cross-team dependencies, and operational monitoring pipelines. The dependency graph of a large enterprise application often spans hundreds of services and thousands of integration points. In such ecosystems, even minor modifications can propagate unpredictably through tightly coupled interfaces.

Velocity–Stability Coupling Theory predicts that instability thresholds shift to the left in high-coupling environments. In other words, the amount of acceleration that can be safely absorbed decreases as structural complexity increases. AI-assisted development in corporate programming contexts, therefore, operates within narrower stability envelopes than in small-scale or low-dependency environments. The same generative output that appears benign in an isolated repository may introduce cascading failures in a regulated, multi-system architecture.

2. Legacy Systems and Path Dependence

Corporate codebases are frequently shaped by years or decades of incremental modification. Path dependence constrains architectural flexibility. Decisions made under historical constraints persist as structural residues in present systems. AI systems trained primarily on modern open-source conventions may generate patterns that conflict with these legacy architectures.

Under the Velocity–Stability Coupling Theory, legacy path dependence increases the sensitivity of drift amplification. AI-generated local optimizations that ignore historical constraints can destabilize brittle subsystems. In enterprise contexts, architectural erosion does not merely increase maintenance burden; it can interrupt revenue-critical workflows or violate contractual obligations. Acceleration must therefore be governed more conservatively in path-dependent systems.

3. Compliance, Auditability, and Traceability Constraints

Corporate programming often operates under compliance frameworks that require traceability, audit logs, reproducibility, and documentation fidelity. Financial services, healthcare, defense, and infrastructure industries impose strict validation protocols. AI-generated code introduces epistemic opacity. The reasoning behind generated structures may not be explicitly documented or reproducible in traditional audit terms.

In regulated environments, absorptive capacity extends beyond technical validation. Velocity–Stability Coupling Theory therefore counts compliance verification bandwidth as part of it: documentation review, security audit, and regulatory attestation processes. Acceleration without proportional expansion of these verification layers narrows the stability envelope sharply. In extreme cases, compliance breaches may arise not from code defects but from insufficient transparency into code provenance.
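Provenance transparency can start with an audit record attached to each AI-assisted change. The record captures which tool produced the change, a fingerprint of the exact code, and who reviewed it. The sketch below uses a SHA-256 digest of the diff so that an auditor can confirm the code in the repository is the code that was reviewed. The record fields, the tool identifier, and the change ID format are hypothetical, and this is not a format prescribed by any regulation.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical audit record attached to an AI-assisted change."""
    change_id: str
    tool: str            # assistant identifier as reported by the team
    diff_sha256: str     # fingerprint of the exact code that was reviewed
    human_reviewer: str
    review_notes: str


def make_record(change_id: str, tool: str, diff_text: str,
                reviewer: str, notes: str) -> ProvenanceRecord:
    digest = hashlib.sha256(diff_text.encode("utf-8")).hexdigest()
    return ProvenanceRecord(change_id, tool, digest, reviewer, notes)


def verify(record: ProvenanceRecord, diff_text: str) -> bool:
    """Check that the code under audit is byte-for-byte the code that was reviewed."""
    return hashlib.sha256(diff_text.encode("utf-8")).hexdigest() == record.diff_sha256


diff = "def apply_discount(total, rate):\n    return total * (1 - rate)\n"
rec = make_record("CHG-1042", "assistant-x", diff, "j.doe", "checked rounding and input range")
print(json.dumps(asdict(rec), indent=2))
print(verify(rec, diff), verify(rec, diff + "# edited after review\n"))
```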

4. Organizational Incentives and Metric Distortion

Performance metrics and incentive systems shape corporate programming environments. Teams are often evaluated on delivery velocity, feature throughput, or sprint completion rates. AI integration can artificially inflate these metrics in the short term. However, metric fixation may incentivize superficial productivity at the expense of architectural health.

Empirical organizational research demonstrates that when performance measures become targets, they distort behavior. Under acceleration-first incentive structures, developers may overuse AI tools to maximize visible output. This behavior increases code churn and architectural drift. Velocity–Stability Coupling Theory predicts that such metric-driven acceleration hastens instability unless counterbalanced by stability-aware governance metrics.

To mitigate this distortion, corporate environments must integrate stability indicators into performance frameworks. These include defect clustering metrics, rollback frequency, architectural refactor ratios, and entropy measures. Without such a balance, incentive systems become instability multipliers.
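A performance framework that balances throughput with stability could discount delivered features by stability signals, so that raw output alone cannot dominate the score. The sketch below multiplies throughput by three factors: the complement of rollback rate, the complement of a defect-clustering share, and a penalty for a refactor ratio below target. The metric definitions, the 0.15 target, and the multiplicative form are all hypothetical choices, not a validated scoring model.

```python
from dataclasses import dataclass


@dataclass
class TeamPeriod:
    """Hypothetical per-period team metrics."""
    features_shipped: int
    rollbacks: int
    deployments: int
    defect_cluster_share: float   # share of defects in the highest-churn files, 0..1
    refactor_ratio: float         # refactoring commits / all commits, 0..1


def balanced_score(p: TeamPeriod, target_refactor_ratio: float = 0.15) -> float:
    """Throughput discounted by stability signals; weights are illustrative only."""
    rollback_rate = p.rollbacks / p.deployments if p.deployments else 0.0
    refactor_gap = max(0.0, target_refactor_ratio - p.refactor_ratio)
    stability = (1 - rollback_rate) * (1 - p.defect_cluster_share) * (1 - refactor_gap)
    return p.features_shipped * stability


team_a = TeamPeriod(features_shipped=20, rollbacks=1, deployments=20,
                    defect_cluster_share=0.2, refactor_ratio=0.15)
team_b = TeamPeriod(features_shipped=30, rollbacks=6, deployments=20,
                    defect_cluster_share=0.6, refactor_ratio=0.02)
for name, team in (("A", team_a), ("B", team_b)):
    print(f"team {name}: raw={team.features_shipped}  balanced={balanced_score(team):.1f}")
```

Team B ships more features but scores lower once its rollbacks and clustered defects are counted, which is the rebalancing the paragraph calls for.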

5. Cross-Team Coordination and Dependency Cascades

Enterprise software systems are rarely owned by a single team. Cross-functional dependencies introduce coordination complexity. AI-generated changes may inadvertently violate implicit interface contracts between teams. As velocity increases, coordination latency becomes a bottleneck: changes propagate faster than communication channels can absorb them.

Velocity–Stability Coupling Theory conceptualizes cross-team coordination as part of absorptive capacity. Communication bandwidth, documentation clarity, and transparency into dependencies influence how quickly changes can be safely integrated. AI amplification reduces the time between change generation and integration, compressing coordination windows. If communication processes remain static while velocity increases, misalignment cascades become more probable.
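A coordination window can be enforced mechanically. The sketch below flags changes that touch an interface owned by another team and were merged before the owning team had a minimum notice period to respond. The 48-hour window, the ownership map, and the change records are hypothetical. The point is that the gate is based on elapsed time, so it protects coordination windows even when AI shortens generation time.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Change:
    """Hypothetical change record."""
    change_id: str
    author_team: str
    touched_interfaces: list[str]
    opened_at: datetime
    merged_at: datetime


def coordination_violations(changes: list[Change],
                            interface_owners: dict[str, str],
                            notice: timedelta = timedelta(hours=48)) -> list[str]:
    """IDs of changes that alter another team's interface and merged before the
    owning team had the minimum notice window (a hypothetical policy) to respond."""
    flagged = []
    for c in changes:
        foreign = [i for i in c.touched_interfaces
                   if interface_owners.get(i, c.author_team) != c.author_team]
        if foreign and c.merged_at - c.opened_at < notice:
            flagged.append(c.change_id)
    return flagged


t0 = datetime(2024, 5, 6, 9, 0)
owners = {"payments.v2": "payments", "ledger.api": "finance"}  # hypothetical
changes = [
    Change("A", "payments", ["payments.v2"], t0, t0 + timedelta(hours=2)),
    Change("B", "checkout", ["payments.v2"], t0, t0 + timedelta(hours=6)),
    Change("C", "checkout", ["ledger.api"], t0, t0 + timedelta(hours=72)),
]
print(coordination_violations(changes, owners))
```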

6. Security Surfaces and Attack Amplification

Corporate programming introduces security as a first-class constraint. AI-generated code may inadvertently introduce vulnerabilities, particularly when suggestions reference outdated libraries or insecure patterns. High-velocity integration cycles reduce the time available for deep security review. Under a distributional shift, AI may produce syntactically correct but semantically insecure code.

Security engineering research consistently demonstrates that vulnerability density correlates with modification frequency in high-churn modules. When AI accelerates churn without parallel investment in automated security analysis, attack surfaces expand. Velocity–Stability Coupling Theory, therefore, predicts amplified security fragility under unbounded acceleration. The Controlled Acceleration Architecture must integrate automated security scanning, dependency validation, and staged rollouts to prevent systemic exposure.
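Staged rollouts limit blast radius by exposing a change to growing fractions of traffic and halting at the first sign of regression. The sketch below shows only the gating logic. The stage fractions, the 1.5× tolerance over baseline error rate, and the `observe_error_rate` callback are hypothetical. In practice the callback would query the team's monitoring system after each stage has run long enough to collect a signal.

```python
from typing import Callable

STAGES = (0.01, 0.10, 0.50, 1.00)  # hypothetical traffic fractions


def staged_rollout(observe_error_rate: Callable[[float], float],
                   baseline_error_rate: float,
                   tolerance: float = 1.5) -> tuple[str, float]:
    """Advance through traffic stages, halting if errors exceed tolerance x baseline.

    Returns ("completed", 1.0) or ("rolled_back", fraction_at_which_it_failed).
    """
    limit = baseline_error_rate * tolerance
    for fraction in STAGES:
        if observe_error_rate(fraction) > limit:
            return ("rolled_back", fraction)
    return ("completed", STAGES[-1])


def healthy(fraction: float) -> float:
    return 0.010  # error rate stays at baseline


def regression(fraction: float) -> float:
    return 0.010 if fraction < 0.5 else 0.030  # defect surfaces only under heavy load


print(staged_rollout(healthy, baseline_error_rate=0.010))
print(staged_rollout(regression, baseline_error_rate=0.010))
```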

7. Cultural Adaptation and Cognitive Reconfiguration

Corporate programming is not purely technical. It is cultural. The introduction of AI alters cognitive roles within teams. Developers shift from creators to validators. Senior engineers transition from architecture authors to governance overseers. Without deliberate cultural recalibration, role ambiguity may emerge.

Cognitive reconfiguration under acceleration can lead to epistemic drift, in which understanding of system behavior becomes fragmented. In large enterprises, institutional knowledge is already distributed across teams. AI-generated abstractions may further obscure reasoning chains. Stability governance must therefore incorporate knowledge preservation strategies, including structured documentation augmentation and architectural review boards.

8. Corporate Stability Envelope Modeling

In enterprise environments, the Stability Envelope Model becomes especially critical because the safe operating range for acceleration is inherently narrower. This contraction occurs due to high coupling density across systems, stringent regulatory compliance requirements, entrenched legacy path dependence, cross-team coordination constraints, and elevated security exposure risks. In such contexts, even modest increases in development velocity can propagate widely and unpredictably, amplifying systemic fragility.

Expanding this envelope requires deliberate and sustained investment in absorptive capacity rather than incremental tooling adjustments. Organizations must strengthen advanced CI/CD validation pipelines, deepen and broaden automated test coverage, and implement robust static and dynamic analysis mechanisms to detect instability early. Equally important is reinforcing cross-team interface documentation and formal architectural review governance to ensure that accelerated change remains coherent across organizational boundaries. Only through these coordinated structural investments can enterprise systems safely accommodate greater AI-driven acceleration. Acceleration must be phased relative to these reinforcements. Immediate large-scale AI deployment in brittle enterprise environments increases the probability of systemic instability.

Corporate Implications Within Velocity–Stability Coupling Theory

Corporate programming environments represent high-stakes instantiations of Velocity–Stability Coupling Theory. The nonlinear coupling between velocity and stability is more pronounced under complexity, compliance, and coupling density. AI integration without structural recalibration risks amplifying hidden fragilities.

The theoretical implication is profound. AI is not merely a productivity enhancer in corporate programming. It is a structural force multiplier. In tightly coupled enterprise ecosystems, amplification effects are magnified. Stability thresholds are lower. Drift amplification loops intensify more rapidly. Governance failures propagate more widely.

Therefore, the safe use of AI in corporate programming requires deliberate velocity modulation informed by absorptive capacity metrics rather than unbounded acceleration. Governance frameworks must balance throughput indicators with stability-oriented metrics to prevent productivity incentives from overwhelming structural integrity. Organizations must embed architectural containment mechanisms that localize potential failures and reduce blast radius under rapid change. Security-aware integration pipelines must operate as first-class safeguards, continuously scanning for vulnerabilities introduced through high-frequency modification. Equally important is cultural adaptation and cognitive recalibration within engineering teams, ensuring that human oversight evolves alongside AI-assisted acceleration rather than becoming marginalized by it. Corporate programming does not invalidate acceleration. It demands its disciplined governance.

Conclusion

Artificial Intelligence unquestionably accelerates software development, but acceleration without structural awareness is not progress; it is amplification. Empirical research on code churn, defect propagation, architectural erosion, and technical debt consistently demonstrates that velocity and stability are interdependent variables within complex socio-technical systems. When velocity is treated as an isolated performance metric, fragility accumulates beneath the surface of apparent productivity. The risk is not that AI generates imperfect code; it is that acceleration reshapes feedback loops, cognitive oversight, architectural coherence, and risk exposure faster than governance models can evolve to keep pace. Velocity–Stability Coupling Theory reframes AI integration as a nonlinear systems problem rather than a tooling enhancement, rejecting the assumption that productivity gains are inherently stabilizing. In tightly coupled environments, minor deviations propagate disproportionately, and under high velocity, oversight saturates while drift accelerates. Treating AI as a neutral assistant obscures its role as a structural force multiplier, and failure to recognize these dynamic risks converts short-term efficiency into long-term brittleness.

Yet the path forward is not restraint for its own sake, but disciplined evolution. Controlled Acceleration Architecture offers a framework for intelligent speed by bounding velocity relative to absorptive capacity, calibrating epistemic trust, expanding validation infrastructure, and containing failure propagation. This approach does not oppose acceleration; it engineers it within stability envelopes that allow systems to adapt without fracturing. Software engineering has repeatedly demonstrated its capacity to recalibrate after destabilizing innovation, from the emergence of DevOps to the institutionalization of continuous integration and observability. AI integration represents a similar inflection point. The defining question is not how fast development can proceed, but whether the field is willing to understand and govern the structural consequences of movement itself. If velocity is deliberately managed rather than indiscriminately maximized, AI will not merely compress development cycles; it will enable more coherent, resilient software systems capable of evolving under continuous change rather than collapsing beneath it.

References

Bommasani, Rishi, et al. “On the Opportunities and Risks of Foundation Models.” arXiv preprint arXiv:2108.07258, 2021.

Chen, Mark, et al. “Evaluating Large Language Models Trained on Code.” arXiv preprint arXiv:2107.03374, 2021.

Dekker, Sidney. Drift into Failure: From Hunting Broken Components to Understanding Complex Systems. Ashgate Publishing, 2011.

Doshi-Velez, Finale, and Been Kim. “Towards a Rigorous Science of Interpretable Machine Learning.” arXiv preprint arXiv:1702.08608, 2017.

Forsgren, Nicole, Jez Humble, and Gene Kim. Accelerate: The Science of Lean Software and DevOps: Building and Scaling High-Performing Technology Organizations. IT Revolution Press, 2018.

Hendrycks, Dan, and Kevin Gimpel. “A Baseline for Detecting Misclassified and Out-of-Distribution Examples in Neural Networks.” International Conference on Learning Representations, 2017.

Kim, Sunghun, et al. “An Empirical Study of Code Churn and Defects in Software.” IEEE International Conference on Software Maintenance, 2006, pp. 364–373.

Leveson, Nancy. Engineering a Safer World: Systems Thinking Applied to Safety. MIT Press, 2011.

Mäntylä, Mika V., and Casper Lassenius. “What Types of Defects Are Really Discovered in Code Reviews?” IEEE Transactions on Software Engineering, vol. 35, no. 3, 2009, pp. 430–448.

Nijkamp, Erik, et al. “CodeGen: An Open Large Language Model for Code with Multi-Turn Program Synthesis.” arXiv preprint arXiv:2203.13474, 2022.

Parasuraman, Raja, and Victor Riley. “Humans and Automation: Use, Misuse, Disuse, Abuse.” Human Factors, vol. 39, no. 2, 1997, pp. 230–253.

Parnas, David L. “On the Criteria To Be Used in Decomposing Systems into Modules.” Communications of the ACM, vol. 15, no. 12, 1972, pp. 1053–1058.

Perrow, Charles. Normal Accidents: Living with High-Risk Technologies. Basic Books, 1984.

Rahman, Foyzur, et al. “Recalling the ‘Imprecision’ of Code Churn Metrics in Defect Prediction.” Empirical Software Engineering, vol. 18, no. 3, 2013, pp. 514–548.

Shaw, Mary, and David Garlan. Software Architecture: Perspectives on an Emerging Discipline. Prentice Hall, 1996.

Sculley, D., et al. “Hidden Technical Debt in Machine Learning Systems.” Advances in Neural Information Processing Systems, 2015.

Turner, Richard. “Software Safety and Software Systems.” IEEE Software, vol. 22, no. 3, 2005, pp. 30–37.

Widmer, Gerhard, and Miroslav Kubat. “Learning in the Presence of Concept Drift and Hidden Contexts.” Machine Learning, vol. 23, no. 1, 1996, pp. 69–101.

European Parliament and Council of the European Union. Artificial Intelligence Act. Official Journal of the European Union, 2024.

National Institute of Standards and Technology. Artificial Intelligence Risk Management Framework (AI RMF 1.0). U.S. Department of Commerce, 2023.